Tree sort – sorting with a binary search tree. Time complexity is O(n log n) on average and O(n²) in the worst case, when the tree degenerates into a list (for example, on already sorted input). In such a tree, every node has smaller numbers in its left subtree and larger numbers in its right subtree, so reading the values from left to right, starting from the root, gives a sorted list of numbers. Amazing, right?

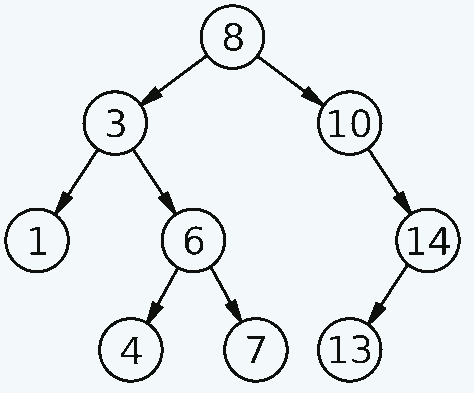

Let’s consider the binary search tree diagram:

Derrick Coetzee (public domain)

Try reading the numbers manually, starting from node 3 in the lower left part of the tree: for each node, first read its left branch, then the node itself, then its right branch.

It will look like this:

- The second to last node on the bottom left is 3.

- It has a left branch – 1.

- We take this number (1)

- Next we take the vertex itself 3 (1, 3)

- On the right is branch 6, but it contains branches. Therefore, we read it in the same way.

- Left branch of node 6 is number 4 (1, 3, 4)

- The node itself is 6 (1, 3, 4, 6)

- Right 7 (1, 3, 4, 6, 7)

- We go up to the root node – 8 (1, 3, 4, 6, 7, 8)

- Read everything to the right of the root in the same way

- We get the final list – 1, 3, 4, 6, 7, 8, 10, 13, 14
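The manual reading above is an in-order traversal. Here is a minimal sketch in Python (not the language of the author's example), assuming the tree layout described in the walkthrough; the names `Node` and `in_order` are illustrative only:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    value: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def in_order(node: Optional[Node], out: List[int]) -> List[int]:
    """Visit the left subtree, then the node itself, then the right subtree."""
    if node is None:
        return out
    in_order(node.left, out)
    out.append(node.value)
    in_order(node.right, out)
    return out


# The tree from the walkthrough, written out by hand (layout is an assumption).
tree = Node(
    8,
    Node(3, Node(1), Node(6, Node(4), Node(7))),
    Node(10, None, Node(14, Node(13))),
)

print(in_order(tree, []))  # [1, 3, 4, 6, 7, 8, 10, 13, 14]
```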

To implement the algorithm in code, two functions are required:

- Building the binary search tree

- Printing the binary search tree in the correct order

A binary search tree is assembled in the same way as it is read: each number is attached to the left or right of a node, depending on whether it is smaller or larger than that node's value.

Example in Lua:

Node = {}

function Node:new(value, lhs, rhs)

local output = {}

setmetatable(output, self)

self.__index = self

output.value = value

output.lhs = lhs

output.rhs = rhs

output.counter = 1

return output

end

function Node:Increment()

self.counter = self.counter + 1

end

function Node:Insert(value)

if self.lhs ~= nil and self.lhs.value > value then

self.lhs:Insert(value)

return

end

if self.rhs ~= nil and self.rhs.value < value then

self.rhs:Insert(value)

return

end

if self.value == value then

self:Increment()

return

elseif self.value > value then

if self.lhs == nil then

self.lhs = Node:new(value, nil, nil)

else

self.lhs:Insert(value)

end

return

else

if self.rhs == nil then

self.rhs = Node:new(value, nil, nil)

else

self.rhs:Insert(value)

end

return

end

end

function Node:InOrder(output)

if self.lhs ~= nil then

output = self.lhs:InOrder(output)

end

output = self:printSelf(output)

if self.rhs ~= nil then

output = self.rhs:InOrder(output)

end

return output

end

function Node:printSelf(output)

for i=0,self.counter-1 do

output = output .. tostring(self.value) .. " "

end

return output

end

function PrintArray(numbers)

output = ""

for i=0,#numbers do

output = output .. tostring(numbers[i]) .. " "

end

print(output)

end

function Treesort(numbers)

rootNode = Node:new(numbers[0], nil, nil)

for i=1,#numbers do

rootNode:Insert(numbers[i])

end

print(rootNode:InOrder(""))

end

numbersCount = 10

maxNumber = 9

numbers = {}

for i=0,numbersCount-1 do

numbers[i] = math.random(0, maxNumber)

end

PrintArray(numbers)

Treesort(numbers)

An important nuance: many interesting mechanisms have been devised for attaching numbers equal to a node's value. I simply added a counter to the node class, and when printing, each number is output as many times as its counter says.

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/treesort

Sources

Convert Sorted Array to Binary Search Tree (LeetCode 108. Algorithm Explained) – YouTube

Sorting algorithms/Tree sort on a linked list – Rosetta Code

How to handle duplicates in Binary Search Tree? – GeeksforGeeks

Tree Sort | GeeksforGeeks – YouTube

Bucket Sort

Bucket Sort – sorting by buckets. The algorithm is similar to counting sort, with the difference that the numbers are collected into “buckets” that each cover a range of values. Each bucket is then sorted with any other sufficiently fast sorting algorithm, and the final step is to concatenate the buckets one by one, which yields a sorted list.

With k buckets, the algorithm’s time complexity is O(n + k) on average for uniformly distributed input and O(n²) in the worst case, when most numbers land in the same bucket. The algorithm works in linear time for data that follows a uniform distribution. To put it simply, the elements must fall within a certain range, without “spikes”, for example, numbers from 0.0 to 1.0. If a 4 or a 999 turns up among such numbers, the series no longer counts as “even”, to put it in street terms.
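To make the scatter, sort, and concatenate steps concrete, here is a minimal sketch in Python. It assumes non-negative numbers and uses the built-in `sorted` for the per-bucket sort; the function name `bucket_sort` is illustrative only:

```python
from typing import List


def bucket_sort(numbers: List[float], buckets_count: int) -> List[float]:
    """Sort non-negative numbers by scattering them into range buckets."""
    if not numbers:
        return []
    # Exclusive upper bound, so the maximum still lands in the last bucket.
    upper = max(numbers) + 1
    buckets: List[List[float]] = [[] for _ in range(buckets_count)]
    for number in numbers:
        index = int(buckets_count * number / upper)
        buckets[index].append(number)
    result: List[float] = []
    for bucket in buckets:
        # Any sufficiently fast sort works here; sorted() is an assumption.
        result.extend(sorted(bucket))
    return result


print(bucket_sort([7, 3, 9, 1, 3, 0, 10], 4))  # [0, 1, 3, 3, 7, 9, 10]
```

If all the numbers cluster in one range, they end up in a single bucket and the whole job falls to the inner sort, which is why the distribution of the input matters.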

Example of implementation in Julia:

function bucketSort(numbers, bucketsCount)

buckets = Vector{Vector{Int}}()

for i in 0:bucketsCount - 1

bucket = Vector{Int}()

push!(buckets, bucket)

end

maxNumber = maximum(numbers)

for i in 0:length(numbers) - 1

bucketIndex = 1 + Int(floor(bucketsCount * numbers[1 + i] / (maxNumber + 1)))

push!(buckets[bucketIndex], numbers[1 + i])

end

for i in 0:length(buckets) - 1

bucketIndex = 1 + i

buckets[bucketIndex] = sort(buckets[bucketIndex])

end

flat = [(buckets...)...]

print(flat, "\n")

end

numbersCount = 10

maxNumber = 10

numbers = rand(1:maxNumber, numbersCount)

print(numbers,"\n")

bucketsCount = 10

bucketSort(numbers, bucketsCount)

The algorithm's performance is also affected by the number of buckets: for a larger number of numbers, it is better to take a larger number of buckets (Algorithms in a Nutshell by George T. Heineman).

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/bucketSort

Sources

https://www.youtube.com/watch?v=VuXbEb5ywrU

https://www.youtube.com/watch?v=ELrhrrCjDOA

https://medium.com/karuna-sehgal/an-introduction-to-bucket-sort-62aa5325d124

https://www.geeksforgeeks.org/bucket-sort-2/

https://ru.wikipedia.org/wiki/%D0%91%D0%BB%D0%BE%D1%87%D0%BD%D0%B0%D1%8F_%D1%81%D0%BE%D1%80%D1%82%D0%B8%D1%80%D0%BE%D0%B2%D0%BA%D0%B0

https://www.youtube.com/watch?v=LPrF9yEKTks

https://en.wikipedia.org/wiki/Bucket_sort

https://julialang.org/

https://www.oreilly.com/library/view/algorithms-in-a/9780596516246/ch04s08.html

Radixsort

Radix Sort – sorting digit by digit. The algorithm is similar to counting sort in that elements are never compared with each other; instead, they are grouped *digit by digit* into *buckets*, and the bucket is chosen by the value of the current digit. Time complexity is O(nd), where d is the number of digits in the longest number.

It works something like this:

- As input we receive the numbers 6, 12, 44, 9

- Let’s create 10 list buckets (0-9) into which we will distribute the numbers digit by digit.

Next:

- Start a loop with counter i that runs up to the maximum number of digits in a number

- Using index i, counted from right to left, take one digit from each number; if the number has no digit at that position, treat it as zero

- Convert the character to a number

- Select the bucket whose index equals that digit and put the whole number there

- After iterating over all the numbers, concatenate the buckets back into a list of numbers

- The numbers are now sorted by the current digit

- Repeat until all the digit positions have been processed
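The steps above can be sketched in Python as follows. This is a minimal version for non-negative integers that extracts digits arithmetically rather than through strings; the name `radix_sort` is illustrative only:

```python
from typing import List


def radix_sort(numbers: List[int]) -> List[int]:
    """LSD radix sort for non-negative integers, one decimal digit per pass."""
    if not numbers:
        return []
    result = list(numbers)
    digits = len(str(max(result)))
    for position in range(digits):
        buckets: List[List[int]] = [[] for _ in range(10)]
        for number in result:
            # A missing digit (short number) naturally evaluates to zero.
            digit = (number // 10 ** position) % 10
            buckets[digit].append(number)
        # Concatenate the buckets back into one list, preserving order.
        result = [number for bucket in buckets for number in bucket]
    return result


print(radix_sort([6, 12, 44, 9]))  # [6, 9, 12, 44]
```

After the first pass the list is 12, 44, 6, 9 (ordered by the last digit); the second pass orders it by the tens digit and keeps the earlier order among ties, which is what makes the result sorted.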

Radix Sort example in Scala:

import scala.collection.mutable.ListBuffer

import scala.util.Random.nextInt

object RadixSort {

def main(args: Array[String]) = {

var maxNumber = 200

var numbersCount = 30

var maxLength = maxNumber.toString.length() - 1

var referenceNumbers = LazyList.continually(nextInt(maxNumber + 1)).take(numbersCount).toList

var numbers = referenceNumbers

var buckets = List.fill(10)(ListBuffer[Int]())

for( i <- 0 to maxLength) { numbers.foreach( number => {

var numberString = number.toString

if (numberString.length() > i) {

var index = numberString.length() - i - 1

var character = numberString.charAt(index).toString

var characterInteger = character.toInt

buckets.apply(characterInteger) += number

}

else {

buckets.apply(0) += number

}

}

)

numbers = buckets.flatten

buckets.foreach(x => x.clear())

}

println(referenceNumbers)

println(numbers)

println(s"Validation result: ${numbers == referenceNumbers.sorted}")

}

}

The algorithm also has a version for parallel execution, for example on a GPU; there is also a bit sorting variant, which is probably very interesting and truly breathtaking!

Links

https://gitlab.com/demensdeum/algorithms/-/blob/master/sortAlgorithms/radixSort/radixSort.scala

Sources

https://ru.wikipedia.org/wiki/%D0%9F%D0%BE%D1%80%D0%B0%D0%B7%D1%80%D1%8F%D0%B4%D0%BD%D0%B0%D1%8F_%D1%81%D0%BE%D1%80%D1%82%D0%B8%D1%80%D0%BE%D0%B2%D0%BA%D0%B0

https://www.geeksforgeeks.org/radix-sort/

https://www.youtube.com/watch?v=toAlAJKojos

https://github.com/gyatskov/radix-sort

Heapsort

Heapsort is a pyramid sort. The time complexity of the algorithm is O(n log n), fast, right? I would call this sorting – sorting of falling stones. It seems to me that the easiest way to explain it is visually.

The input is a list of numbers, for example:

5, 0, 7, 2, 3, 9, 4

From left to right, a data structure is built – a binary tree, or as I call it, a pyramid. Each element of the pyramid can have at most two child elements and only one parent.

Let’s make a binary tree:

⠀⠀5

⠀0⠀7

2 3 9 4

If you look at the pyramid for a long time, you can see that it is simply the numbers from the array, one after another, with the number of elements on each floor doubling.
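This array-to-pyramid correspondence can be shown with a small Python sketch. The helper names `levels` and `children` are illustrative, and the index formulas assume a zero-based array:

```python
from typing import List, Tuple


def levels(numbers: List[int]) -> List[List[int]]:
    """Split the array into pyramid floors of width 1, 2, 4, ..."""
    floors = []
    start, width = 0, 1
    while start < len(numbers):
        floors.append(numbers[start:start + width])
        start += width
        width *= 2
    return floors


def children(index: int) -> Tuple[int, int]:
    """Indices of the left and right child of the element at index."""
    return 2 * index + 1, 2 * index + 2


print(levels([5, 0, 7, 2, 3, 9, 4]))  # [[5], [0, 7], [2, 3, 9, 4]]
print(children(1))                    # (3, 4): under 0 are the elements 2 and 3
```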

Next comes the most interesting part, we sort the pyramid from the bottom up, using the falling pebbles method (heapify). Sorting could start from the last floor (2 3 9 4), but there is no point because there is no floor below to fall to.

Therefore, we begin to drop elements from the penultimate floor (0 7)

⠀⠀5

⠀0⠀7

2 3 9 4

The first element to fall is chosen from the right, in our case it is 7, then we look at what is under it, and under it is 9 and 4, nine is greater than four, and nine is also greater than seven! We drop 7 on 9, and lift 9 to the place of 7.

⠀⠀5

⠀0⠀9

2 3 7 4

Next, we understand that the seven has nowhere to fall lower, we move on to the number 0, which is on the penultimate floor on the left:

⠀⠀5

⠀0⠀9

2 3 7 4

We look at what’s underneath it – 2 and 3, two is less than three, three is greater than zero, so we swap zero and three:

⠀⠀5

⠀3⠀9

2 0 7 4

When you reach the end of the floor, go to the floor above and drop everything there if you can.

The result will be a data structure – a heap, namely a max heap, since the largest element is at the top:

⠀⠀9

⠀3⠀7

2 0 5 4

If you return to the array representation, you will get a list:

[9, 3, 7, 2, 0, 5, 4]

From this we can conclude that by swapping the first and last elements, we will get the first number in the final sorted position, namely 9 should be at the end of the sorted list, swap them:

[4, 3, 7, 2, 0, 5, 9]

Let’s look at a binary tree:

⠀⠀4

⠀3⠀7

2 0 5 9

We have a situation where the bottom of the tree is sorted, we just need to drop 4 to the correct position, we repeat the algorithm, but we do not take into account the already sorted numbers, namely 9:

⠀⠀4

⠀3⠀7

2 0 5 9

⠀⠀7

⠀3⠀4

2 0 5 9

⠀⠀7

⠀3⠀5

2 0 4 9

It turned out that, having dropped 4, we raised the next largest number after 9 – 7. We swap the last unsorted number (4) and the largest number (7)

⠀⠀4

⠀3⠀5

2 0 7 9

It turns out that now we have two numbers in the correct final position:

4, 3, 5, 2, 0, 7, 9

Then we repeat the sorting algorithm, ignoring those already sorted, and as a result we get a heap of the following type:

⠀⠀0

⠀2⠀3

4 5 7 9

Or as a list:

0, 2, 3, 4, 5, 7, 9

Implementation

The algorithm is usually divided into three functions:

- Creating a heap

- Heapify Algorithm

- Replace the last unsorted element with the first

The heap is created by running the heapify function over the parent nodes, starting from the penultimate row of the binary tree and moving from right to left to the beginning of the array. Then the loop begins: the first and last unsorted elements are swapped, after which the new first element falls down or stays in place, so the largest remaining element ends up in first place. The loop repeats with one fewer participant each time, since after each pass the sorted numbers accumulate at the end of the list.
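Stripped of visualization, the three parts can be sketched compactly in Python. This is a minimal version under the same zero-based index assumptions; the function names are illustrative and do not match the Ruby example:

```python
from typing import List


def sift_down(numbers: List[int], start: int, right_bound: int) -> None:
    """Drop numbers[start] down until both children (within right_bound) are smaller."""
    cursor = start
    while 2 * cursor + 1 <= right_bound:
        child = 2 * cursor + 1
        # Pick the bigger of the two children, if the right one exists.
        if child + 1 <= right_bound and numbers[child] < numbers[child + 1]:
            child += 1
        if numbers[cursor] >= numbers[child]:
            break
        numbers[cursor], numbers[child] = numbers[child], numbers[cursor]
        cursor = child


def build_max_heap(numbers: List[int]) -> None:
    """Sift down every parent, from the last parent back to the root."""
    last = len(numbers) - 1
    for start in range((last - 1) // 2, -1, -1):
        sift_down(numbers, start, last)


def heapsort(raw: List[int]) -> List[int]:
    numbers = list(raw)
    build_max_heap(numbers)
    for right_bound in range(len(numbers) - 1, 0, -1):
        # Move the current maximum to its final place, then repair the heap.
        numbers[0], numbers[right_bound] = numbers[right_bound], numbers[0]
        sift_down(numbers, 0, right_bound - 1)
    return numbers


heap = [5, 0, 7, 2, 3, 9, 4]
build_max_heap(heap)
print(heap)                             # [9, 3, 7, 2, 0, 5, 4]
print(heapsort([5, 0, 7, 2, 3, 9, 4]))  # [0, 2, 3, 4, 5, 7, 9]
```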

Heapsort example in Ruby:

# Set to true to print the heap at every step
DEMO = false

module Colors

BLUE = "\033[94m"

RED = "\033[31m"

STOP = "\033[0m"

end

def heapsort(rawNumbers)

numbers = rawNumbers.dup

def swap(numbers, from, to)

temp = numbers[from]

numbers[from] = numbers[to]

numbers[to] = temp

end

def heapify(numbers)

count = numbers.length()

lastParentNode = (count - 2) / 2

for start in lastParentNode.downto(0)

siftDown(numbers, start, count - 1)

end

if DEMO

puts "--- heapify ends ---"

end

end

def siftDown(numbers, start, rightBound)

cursor = start

printBinaryHeap(numbers, cursor, rightBound)

def calculateLhsChildIndex(cursor)

return cursor * 2 + 1

end

def calculateRhsChildIndex(cursor)

return cursor * 2 + 2

end

while calculateLhsChildIndex(cursor) <= rightBound

lhsChildIndex = calculateLhsChildIndex(cursor)

rhsChildIndex = calculateRhsChildIndex(cursor)

lhsNumber = numbers[lhsChildIndex]

biggerChildIndex = lhsChildIndex

if rhsChildIndex <= rightBound

rhsNumber = numbers[rhsChildIndex]

if lhsNumber < rhsNumber

biggerChildIndex = rhsChildIndex

end

end

if numbers[cursor] < numbers[biggerChildIndex]

swap(numbers, cursor, biggerChildIndex)

cursor = biggerChildIndex

else

break

end

printBinaryHeap(numbers, cursor, rightBound)

end

printBinaryHeap(numbers, cursor, rightBound)

end

def printBinaryHeap(numbers, nodeIndex = -1, rightBound = -1)

if DEMO == false

return

end

perLineWidth = (numbers.length() * 4).to_i

linesCount = Math.log2(numbers.length()).ceil()

xPrinterCount = 1

cursor = 0

spacing = 3

for y in (0..linesCount)

line = perLineWidth.times.map { " " }

spacing = spacing == 3 ? 4 : 3

printIndex = (perLineWidth / 2) - (spacing * xPrinterCount) / 2

for x in (0..xPrinterCount - 1)

if cursor >= numbers.length

break

end

if nodeIndex != -1 && cursor == nodeIndex

line[printIndex] = "%s%s%s" % [Colors::RED, numbers[cursor].to_s, Colors::STOP]

elsif rightBound != -1 && cursor > rightBound

line[printIndex] = "%s%s%s" % [Colors::BLUE, numbers[cursor].to_s, Colors::STOP]

else

line[printIndex] = numbers[cursor].to_s

end

cursor += 1

printIndex += spacing

end

print line.join()

xPrinterCount *= 2

print "\n"

end

end

heapify(numbers)

rightBound = numbers.length() - 1

while rightBound > 0

swap(numbers, 0, rightBound)

rightBound -= 1

siftDown(numbers, 0, rightBound)

end

return numbers

end

numbersCount = 14

maximalNumber = 10

numbers = numbersCount.times.map { Random.rand(maximalNumber) }

print numbers

print "\n---\n"

start = Time.now

sortedNumbers = heapsort(numbers)

finish = Time.now

heapSortTime = finish - start

start = Time.now

referenceSortedNumbers = numbers.sort()

finish = Time.now

referenceSortTime = finish - start

print "Reference sort: "

print referenceSortedNumbers

print "\n"

print "Reference sort time: %f\n" % referenceSortTime

print "Heap sort: "

print sortedNumbers

print "\n"

if DEMO == false

print "Heap sort time: %f\n" % heapSortTime

else

print "Disable DEMO for performance measure\n"

end

if sortedNumbers != referenceSortedNumbers

puts "Validation failed"

exit 1

else

puts "Validation success"

exit 0

end

Without visualization, this algorithm is not easy to understand, so the first thing I recommend is to write a function that will print the current form of the binary tree.

Links

https://gitlab.com/demensdeum/algorithms/-/blob/master/sortAlgorithms/heapsort/heapsort.rb

Sources

http://rosettacode.org/wiki/Sorting_algorithms/Heapsort

https://www.youtube.com/watch?v=LbB357_RwlY

https://habr.com/ru/company/otus/blog/460087/

https://ru.wikipedia.org/wiki/Пирамидальная_сортировка

https://neerc.ifmo.ru/wiki/index.php?title=Heap_sort

https://wiki5.ru/wiki/Heapsort

https://ru.wikipedia.org/wiki/Дерево_(структура_данных)

https://ru.wikipedia.org/wiki/Куча_(структура_данных)

https://www.youtube.com/watch?v=2DmK_H7IdTo

https://www.youtube.com/watch?v=kU4KBD4NFtw

https://www.youtube.com/watch?v=DU1uG5310x0

https://www.youtube.com/watch?v=BzQGPA_v-vc

https://www.geeksforgeeks.org/array-representation-of-binary-heap/

https://habr.com/ru/post/112222/

https://www.cs.usfca.edu/~galles/visualization/BST.html

https://www.youtube.com/watch?v=EQzqHWtsKq4

https://ru.wikibrief.org/wiki/Heapsort

https://www.youtube.com/watch?v=GUUpmrTnNbw

Quicksort

Quicksort is a divide-and-conquer sorting algorithm. We recursively sort the array of numbers in parts, moving the numbers smaller than a selected pivot element to its left and the larger ones to its right, and placing the pivot itself in the gap between them. After several levels of recursion, we get a sorted list. Time complexity is O(n log n) on average and O(n²) in the worst case.

Scheme:

- We start by receiving the list of elements and the sorting boundaries from the outside. In the first step, the boundaries span the list from beginning to end.

- We check that the start and end boundaries have not met or crossed; if they have, it is time to finish

- We select some element from the list and call it the pivot

- We move it to the right, to the last index, so it doesn’t get in the way

- We create a counter of *smaller numbers* that initially points at the left boundary

- We go through the list in a loop from left to right, up to but not including the last index, where the pivot is located

- Each element is compared with the pivot

- If it is less than the pivot, we swap it with the element at the index held by the counter of smaller numbers, and increment the counter

- When the loop reaches the pivot, we stop and swap the pivot with the element at the index held by the counter of smaller numbers

- We run the algorithm separately for the left part of the list, with the smaller numbers, and for the right part, with the larger ones

- Eventually all the recursive calls stop because of the boundary check in the second step

- We get a sorted list
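The scheme above can be sketched in a few lines of Python. This is a minimal version that takes the middle element as the pivot and treats `right` as an exclusive boundary; both choices are assumptions for illustration:

```python
from typing import List


def quicksort(numbers: List[int], left: int, right: int) -> None:
    """Sort numbers[left:right] in place; right is exclusive."""
    if right - left <= 1:
        return
    pivot_index = left + (right - left) // 2
    last_index = right - 1
    # Park the pivot at the end so it doesn't get in the way.
    numbers[pivot_index], numbers[last_index] = numbers[last_index], numbers[pivot_index]
    pivot = numbers[last_index]
    less_insert_index = left  # the "counter of smaller numbers"
    for i in range(left, last_index):
        if numbers[i] < pivot:
            numbers[i], numbers[less_insert_index] = numbers[less_insert_index], numbers[i]
            less_insert_index += 1
    # Put the pivot into the gap between the smaller and larger parts.
    numbers[last_index], numbers[less_insert_index] = numbers[less_insert_index], numbers[last_index]
    quicksort(numbers, left, less_insert_index)
    quicksort(numbers, less_insert_index + 1, right)


data = [3, -1, 7, 0, 3, 9, -5]
quicksort(data, 0, len(data))
print(data)  # [-5, -1, 0, 3, 3, 7, 9]
```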

Quicksort was invented by the scientist Charles Antony Richard Hoare while he was at Moscow State University, where, having learned Russian, he studied machine translation and probability theory in Kolmogorov’s school. In 1960, due to the political crisis, he left the Soviet Union.

Example implementation in Rust:

use rand::Rng;

fn swap(numbers: &mut [i64], from: usize, to: usize) {

let temp = numbers[from];

numbers[from] = numbers[to];

numbers[to] = temp;

}

fn quicksort(numbers: &mut [i64], left: usize, right: usize) {

if left >= right {

return

}

let length = right - left;

if length <= 1 {

return

}

let pivot_index = left + (length / 2);

let pivot = numbers[pivot_index];

let last_index = right - 1;

swap(numbers, pivot_index, last_index);

let mut less_insert_index = left;

for i in left..last_index {

if numbers[i] < pivot {

swap(numbers, i, less_insert_index);

less_insert_index += 1;

}

}

swap(numbers, last_index, less_insert_index);

quicksort(numbers, left, less_insert_index);

quicksort(numbers, less_insert_index + 1, right);

}

fn main() {

let mut numbers = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0];

let mut reference_numbers = [0, 0, 0, 0, 0, 0, 0, 0, 0, 0];

let mut rng = rand::thread_rng();

for i in 0..numbers.len() {

numbers[i] = rng.gen_range(-10..10);

reference_numbers[i] = numbers[i];

}

reference_numbers.sort();

println!("Numbers {:?}", numbers);

let length = numbers.len();

quicksort(&mut numbers, 0, length);

println!("Numbers {:?}", numbers);

println!("Reference numbers {:?}", reference_numbers);

if numbers != reference_numbers {

println!("Validation failed");

std::process::exit(1);

}

else {

println!("Validation success!");

std::process::exit(0);

}

}

If anything is unclear, I suggest watching the video by Rob Edwards from San Diego State University https://www.youtube.com/watch?v=ZHVk2blR45Q; it shows the essence and implementation of the algorithm in the simplest way, step by step.

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/quickSort

Sources

https://www.youtube.com/watch?v=4s-aG6yGGLU

https://www.youtube.com/watch?v=ywWBy6J5gz8

https://www.youtube.com/watch?v=Hoixgm4-P4M

https://ru.wikipedia.org/wiki/Быстрая_сортировка

https://www.youtube.com/watch?v=XE4VP_8Y0BU

https://www.youtube.com/watch?v=MZaf_9IZCrc

https://www.youtube.com/watch?v=ZHVk2blR45Q

http://rosettacode.org/wiki/Sorting_algorithms/Quicksort

https://www.youtube.com/watch?v=dQw4w9WgXcQ

https://www.youtube.com/watch?v=maibrCbZWKw

https://www.geeksforgeeks.org/quick-sort/

https://www.youtube.com/watch?v=uXBnyYuwPe8

Binary Insertion Sort

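Binary Insertion Sort is a variant of insertion sort in which the insertion position is determined using binary search. The time complexity of the algorithm is O(n²): binary search reduces the number of comparisons to O(n log n), but shifting the elements still takes O(n²) moves.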

The algorithm works like this:

- A loop runs from the beginning to the end of the list

- In the loop, the number to be sorted is selected and saved in a separate variable

- Binary search finds the index at which to insert this number among the numbers to its left

- Once the index is found, the numbers on the left are shifted one position to the right, starting from the insertion index. The shift overwrites the slot of the number being sorted, which is why it was saved beforehand.

- The previously saved number is inserted at the insertion index

- At the end of the loop, the entire list is sorted

During binary search, the number itself may not be found, yet an index still has to be returned. By its nature, binary search ends up at the number closest to the desired one, so to return the index, that number must be compared with the desired one: if the desired one is greater, it goes one position to the right; otherwise it takes that index.
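One way to sidestep the "not found" case is to search a half-open range and always return an insertion point. The Python sketch below does that. It is an alternative formulation for illustration, not the search used in the author's Go code, and the function names are hypothetical:

```python
from typing import List


def find_insert_index(numbers: List[int], item: int, low: int, high: int) -> int:
    """Return the position in numbers[low:high] where item belongs (after equal items)."""
    while low < high:
        center = (low + high) // 2
        if numbers[center] <= item:
            low = center + 1
        else:
            high = center
    return low


def binary_insertion_sort(raw: List[int]) -> List[int]:
    numbers = list(raw)
    for i in range(1, len(numbers)):
        item = numbers[i]  # saved, because the shift below overwrites it
        insert_index = find_insert_index(numbers, item, 0, i)
        # Shift the sorted tail one position to the right.
        for x in range(i, insert_index, -1):
            numbers[x] = numbers[x - 1]
        numbers[insert_index] = item
    return numbers


print(binary_insertion_sort([4, 1, 3, 1, 0]))  # [0, 1, 1, 3, 4]
```

Inserting after equal items keeps the sort stable, at the cost of one extra comparison step compared with returning as soon as an equal item is found.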

Go code:

package main

import (

"fmt"

"math/rand"

"time"

)

const numbersCount = 20

const maximalNumber = 100

func binarySearch(numbers []int, item int, low int, high int) int {

for high > low {

center := (low + high) / 2

if numbers[center] < item {

low = center + 1

} else if numbers[center] > item {

high = center - 1

} else {

return center

}

}

if numbers[low] < item {

return low + 1

} else {

return low

}

}

func main() {

rand.Seed(time.Now().Unix())

var numbers [numbersCount]int

for i := 0; i < numbersCount; i++ {

numbers[i] = rand.Intn(maximalNumber)

}

fmt.Println(numbers)

for i := 1; i < len(numbers); i++ {

searchAreaLastIndex := i - 1

insertNumber := numbers[i]

insertIndex := binarySearch(numbers[:], insertNumber, 0, searchAreaLastIndex)

for x := searchAreaLastIndex; x >= insertIndex; x-- {

numbers[x+1] = numbers[x]

}

numbers[insertIndex] = insertNumber

}

fmt.Println(numbers)

}

Links

Sources

https://www.geeksforgeeks.org/binary-insertion-sort/

https://www.youtube.com/watch?v=-OVB5pOZJug

Shell Sort

Shell Sort is a variant of insertion sorting with preliminary combing of the array of numbers.

We need to remember how insertion sort works:

1. A loop runs from the beginning to the end of the list, dividing the array into two parts: a sorted left part and an unsorted right part

2. For the left part, a second loop is started that compares elements from right to left; the smaller element on the right is moved left until a smaller element is found to its left

3. When both loops finish, we get a sorted list
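As a reminder in code, the three steps can be sketched in Python like this (a minimal version that swaps neighbours instead of shifting; the name `insertion_sort` is illustrative):

```python
from typing import List


def insertion_sort(raw: List[int]) -> List[int]:
    numbers = list(raw)
    # Everything to the left of sort_area is already sorted.
    for sort_area in range(1, len(numbers)):
        rhs = sort_area
        # Move the new element left until a smaller (or equal) one is on its left.
        while rhs > 0 and numbers[rhs - 1] > numbers[rhs]:
            numbers[rhs - 1], numbers[rhs] = numbers[rhs], numbers[rhs - 1]
            rhs -= 1
    return numbers


print(insertion_sort([5, 2, 4, 6, 1, 3]))  # [1, 2, 3, 4, 5, 6]
```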

Once upon a time, computer scientist Donald Shell wondered how to improve the insertion sort algorithm. He came up with the idea of also going through the array with two loops, but comparing elements a certain distance apart and gradually shrinking this “comb” until the algorithm turns into regular insertion sort. Everything is really that simple, with no pitfalls: on top of the two loops we add one more, in which we gradually reduce the size of the “comb”. The only thing you need to do is check the distance when comparing, so that it does not go beyond the array.
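Here is a minimal Python sketch of that idea, assuming the simplest gap sequence (halving the gap on every pass); the name `shell_sort` is illustrative, and this is not the benchmark code from this article:

```python
from typing import List


def shell_sort(raw: List[int]) -> List[int]:
    numbers = list(raw)
    gap = len(numbers) // 2
    # Third, outer loop: gradually shrink the "comb".
    while gap > 0:
        for sort_area in range(gap, len(numbers)):
            rhs = sort_area
            # Insertion sort over elements that are `gap` apart;
            # rhs >= gap keeps the comparison inside the array.
            while rhs >= gap and numbers[rhs - gap] > numbers[rhs]:
                numbers[rhs - gap], numbers[rhs] = numbers[rhs], numbers[rhs - gap]
                rhs -= gap
        gap //= 2
    return numbers


print(shell_sort([9, 8, 3, 7, 5, 6, 4, 1]))  # [1, 3, 4, 5, 6, 7, 8, 9]
```

The last pass always runs with a gap of 1, which is plain insertion sort, so the result is sorted regardless of which larger gaps came before.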

A really interesting topic is the choice of the sequence of changing the comparison length at each iteration of the first cycle. It is interesting because the performance of the algorithm depends on it.

A table of known variants and time complexity can be found here: https://en.wikipedia.org/wiki/Shellsort#Gap_sequences
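Two of the sequences from that table follow simple closed formulas and can be generated instead of hard-coded. The Python sketch below is an illustration: the function names are hypothetical, and stopping at gaps smaller than the list length is a simplifying assumption:

```python
from typing import List


def hibbard_gaps(length: int) -> List[int]:
    """Gaps of the form 2**k - 1 that are smaller than length, ascending."""
    gaps = []
    k = 1
    while 2 ** k - 1 < length:
        gaps.append(2 ** k - 1)
        k += 1
    return gaps


def knuth_gaps(length: int) -> List[int]:
    """Gaps of the form (3**k - 1) // 2 that are smaller than length, ascending."""
    gaps = []
    k = 1
    while (3 ** k - 1) // 2 < length:
        gaps.append((3 ** k - 1) // 2)
        k += 1
    return gaps


print(hibbard_gaps(20))  # [1, 3, 7, 15]
print(knuth_gaps(20))    # [1, 4, 13]
```

A sort would walk these lists from the largest gap down to 1.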

Different people were involved in calculating the ideal distance, apparently they were so interested in the topic. Couldn’t they just run Ruby and call the fastest sort() algorithm?

In general, these strange people wrote dissertations on calculating the distance/gap of the “comb” for the Shell algorithm. I simply used the results of their work and checked 5 types of sequences: Shell’s original, Hibbard, Knuth-Pratt, Ciura, and Sedgewick.

import time

import random

from functools import reduce

from typing import List

import math

DEMO_MODE = False

if input("Demo Mode Y/N? ").upper() == "Y":

DEMO_MODE = True

class Colors:

BLUE = '\033[94m'

RED = '\033[31m'

END = '\033[0m'

def swap(list, lhs, rhs):

list[lhs], list[rhs] = list[rhs], list[lhs]

return list

def colorPrintoutStep(numbers: List[int], lhs: int, rhs: int):

for index, number in enumerate(numbers):

if index == lhs:

print(f"{Colors.BLUE}", end = "")

elif index == rhs:

print(f"{Colors.RED}", end = "")

print(f"{number},", end = "")

if index == lhs or index == rhs:

print(f"{Colors.END}", end = "")

if index == lhs or index == rhs:

print(f"{Colors.END}", end = "")

print("\n")

input(">")

def ShellSortLoop(numbers: List[int], distanceSequence: List[int]):

distanceSequenceIterator = reversed(distanceSequence)

while distance:= next(distanceSequenceIterator, None):

for sortArea in range(0, len(numbers)):

for rhs in reversed(range(distance, sortArea + 1)):

lhs = rhs - distance

if DEMO_MODE:

print(f"Distance: {distance}")

colorPrintoutStep(numbers, lhs, rhs)

if numbers[lhs] > numbers[rhs]:

swap(numbers, lhs, rhs)

else:

break

def ShellSort(numbers: List[int]):

global ShellSequence

ShellSortLoop(numbers, ShellSequence)

def HibbardSort(numbers: List[int]):

global HibbardSequence

ShellSortLoop(numbers, HibbardSequence)

def ShellPlusKnuttPrattSort(numbers: List[int]):

global KnuttPrattSequence

ShellSortLoop(numbers, KnuttPrattSequence)

def ShellPlusCiuraSort(numbers: List[int]):

global CiuraSequence

ShellSortLoop(numbers, CiuraSequence)

def ShellPlusSedgewickSort(numbers: List[int]):

global SedgewickSequence

ShellSortLoop(numbers, SedgewickSequence)

def insertionSort(numbers: List[int]):

global insertionSortDistanceSequence

ShellSortLoop(numbers, insertionSortDistanceSequence)

def defaultSort(numbers: List[int]):

numbers.sort()

def measureExecution(inputNumbers: List[int], algorithmName: str, algorithm):

if DEMO_MODE:

print(f"{algorithmName} started")

numbers = inputNumbers.copy()

startTime = time.perf_counter()

algorithm(numbers)

endTime = time.perf_counter()

print(f"{algorithmName} performance: {endTime - startTime}")

def sortedNumbersAsString(inputNumbers: List[int], algorithm) -> str:

numbers = inputNumbers.copy()

algorithm(numbers)

return str(numbers)

if DEMO_MODE:

maximalNumber = 10

numbersCount = 10

else:

maximalNumber = 10

numbersCount = random.randint(10000, 20000)

randomNumbers = [random.randrange(1, maximalNumber) for i in range(numbersCount)]

ShellSequenceGenerator = lambda n: reduce(lambda x, _: x + [int(x[-1]/2)], range(int(math.log(numbersCount, 2))), [int(numbersCount / 2)])

ShellSequence = ShellSequenceGenerator(randomNumbers)

ShellSequence.reverse()

ShellSequence.pop()

HibbardSequence = [

0, 1, 3, 7, 15, 31, 63, 127, 255, 511, 1023, 2047, 4095,

8191, 16383, 32767, 65535, 131071, 262143, 524287, 1048575,

2097151, 4194303, 8388607, 16777215, 33554431, 67108863, 134217727,

268435455, 536870911, 1073741823, 2147483647, 4294967295, 8589934591

]

KnuttPrattSequence = [

1, 4, 13, 40, 121, 364, 1093, 3280, 9841, 29524, 88573, 265720,

797161, 2391484, 7174453, 21523360, 64570081, 193710244, 581130733,

1743392200, 5230176601, 15690529804, 47071589413

]

CiuraSequence = [

1, 4, 10, 23, 57, 132, 301, 701, 1750, 4376,

10941, 27353, 68383, 170958, 427396, 1068491,

2671228, 6678071, 16695178, 41737946, 104344866,

260862166, 652155416, 1630388541

]

SedgewickSequence = [

1, 5, 19, 41, 109, 209, 505, 929, 2161, 3905,

8929, 16001, 36289, 64769, 146305, 260609, 587521,

1045505, 2354689, 4188161, 9427969, 16764929, 37730305,

67084289, 150958081, 268386305, 603906049, 1073643521,

2415771649, 4294770689, 9663381505, 17179475969

]

insertionSortDistanceSequence = [1]

algorithms = {

"Default Python Sort": defaultSort,

"Shell Sort": ShellSort,

"Shell + Hibbard" : HibbardSort,

"Shell + Prat, Knutt": ShellPlusKnuttPrattSort,

"Shell + Ciura Sort": ShellPlusCiuraSort,

"Shell + Sedgewick Sort": ShellPlusSedgewickSort,

"Insertion Sort": insertionSort

}

for name, algorithm in algorithms.items():

measureExecution(randomNumbers, name, algorithm)

reference = sortedNumbersAsString(randomNumbers, defaultSort)

for name, algorithm in algorithms.items():

if sortedNumbersAsString(randomNumbers, algorithm) != reference:

print("Sorting validation failed")

exit(1)

print("Sorting validation success")

exit(0)

In my implementation, for a random set of numbers, the fastest gaps are Sedgewick and Hibbard.

mypy

I would also like to mention the static type checker for Python 3 – mypy. It helps to cope with the problems inherent in dynamically typed languages, namely, it keeps a value of the wrong type from slipping in where it shouldn’t.
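As a small illustration of what such a check looks like, consider the sketch below. The function `total` is a made-up example; the annotations are ordinary Python type hints, which Python itself ignores at runtime and mypy verifies when run on the file:

```python
from typing import List


def total(numbers: List[int]) -> int:
    """Sum a list of integers."""
    return sum(numbers)


print(total([1, 2, 3]))  # 6

# A call such as total("123") contradicts the annotation above.
# A type checker like mypy should report the incompatible argument
# before the program is ever run, instead of failing at runtime.
```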

As experienced programmers say, “static typing is not needed when you have a team of professionals”, someday we will all become professionals, we will write code in complete unity and understanding with machines, but for now you can use such utilities and languages with static typing.

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/shellSort

http://mypy-lang.org/

Sources

https://dl.acm.org/doi/10.1145/368370.368387

https://en.wikipedia.org/wiki/Shellsort

http://rosettacode.org/wiki/Sorting_algorithms/Shell_sort

https://ru.wikipedia.org/wiki/Сортировка_Шелла

https://neerc.ifmo.ru/wiki/index.php?title=Сортировка_Шелла

https://twitter.com/gvanrossum/status/700741601966985216

Double Selection Sort

Double Selection Sort is a variant of selection sort that should be twice as fast. The vanilla algorithm runs a double loop over the list of numbers, finds the minimum number, and swaps it with the element currently pointed to by the outer loop. Double selection sort looks for both the minimum and the maximum, then replaces two elements pointed to by the outer loop, one on the left and one on the right. This whole orgy ends when the two cursors meet in the middle of the list; as a result, sorted numbers are obtained to the left and right of the center.

The time complexity of the algorithm is similar to Selection Sort – O(n²), but there is supposedly a 30% speedup.
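A minimal Python sketch of the idea follows. The name `double_selection_sort` is illustrative, and the index correction in the middle handles the case where the maximum sits under the left cursor, which this section discusses:

```python
from typing import List


def double_selection_sort(raw: List[int]) -> List[int]:
    numbers = list(raw)
    left, right = 0, len(numbers) - 1
    while left < right:
        min_index, max_index = left, right
        # One pass finds both the minimum and the maximum of the unsorted part.
        for cursor in range(left, right + 1):
            if numbers[cursor] < numbers[min_index]:
                min_index = cursor
            if numbers[cursor] > numbers[max_index]:
                max_index = cursor
        numbers[left], numbers[min_index] = numbers[min_index], numbers[left]
        # If the maximum was under the left cursor, the swap above moved it.
        if max_index == left:
            max_index = min_index
        numbers[right], numbers[max_index] = numbers[max_index], numbers[right]
        left += 1
        right -= 1
    return numbers


print(double_selection_sort([9, 1, 5, 0]))  # [0, 1, 5, 9]
```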

Edge case

Already at this stage you can imagine a collision: for example, the left cursor (where the minimum number goes) may point at the maximum number in the list. Once the minimum is swapped into place, the swap of the maximum immediately breaks, because the maximum has moved. Therefore, all implementations of the algorithm contain a check for such cases and replace the indices with the correct ones. In my implementation, one check was enough:

if (leftCursor == maximalNumberIndex) {

maximalNumberIndex = minimalNumberIndex;

}

Implementation in Cito

Cito is a language for libraries, a translator language. You can write in it for C, C++, C#, Java, JavaScript, Python, Swift, TypeScript, and OpenCL C while knowing absolutely nothing about those languages. Source code in Cito is translated into source code in the supported languages, which can then be used as a library or directly, after fixing up the generated code by hand. A sort of write once, translate to anything.

Double Selection Sort in Cito:

{

public static int[] sort(int[]# numbers, int length)

{

int[]# sortedNumbers = new int[length];

for (int i = 0; i < length; i++) {

sortedNumbers[i] = numbers[i];

}

for (int leftCursor = 0; leftCursor < length / 2; leftCursor++) {

int minimalNumberIndex = leftCursor;

int minimalNumber = sortedNumbers[leftCursor];

int rightCursor = length - (leftCursor + 1);

int maximalNumberIndex = rightCursor;

int maximalNumber = sortedNumbers[maximalNumberIndex];

for (int cursor = leftCursor; cursor <= rightCursor; cursor++) {

int cursorNumber = sortedNumbers[cursor];

if (minimalNumber > cursorNumber) {

minimalNumber = cursorNumber;

minimalNumberIndex = cursor;

}

if (maximalNumber < cursorNumber) {

maximalNumber = cursorNumber;

maximalNumberIndex = cursor;

}

}

if (leftCursor == maximalNumberIndex) {

maximalNumberIndex = minimalNumberIndex;

}

int fromNumber = sortedNumbers[leftCursor];

int toNumber = sortedNumbers[minimalNumberIndex];

sortedNumbers[minimalNumberIndex] = fromNumber;

sortedNumbers[leftCursor] = toNumber;

fromNumber = sortedNumbers[rightCursor];

toNumber = sortedNumbers[maximalNumberIndex];

sortedNumbers[maximalNumberIndex] = fromNumber;

sortedNumbers[rightCursor] = toNumber;

}

return sortedNumbers;

}

}

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/doubleSelectionSort

https://github.com/pfusik/cito

Sources

https://www.researchgate.net/publication/330084245_Improved_Double_Selection_Sort_using_Algorithm

http://algolab.valemak.com/selection-double

https://www.geeksforgeeks.org/sorting-algorithm-slightly-improves-selection-sort/

Cocktail Shaker Sort

Cocktail Shaker Sort – shaker sort, a bidirectional variant of bubble sort.

The algorithm works as follows:

- The initial direction of iteration is selected (usually left-to-right)

- Next, the numbers are checked in pairs in the loop

- If the left element of a pair is larger than the right one, they swap places

- When the pass is finished, the iteration starts again with the direction inverted

- The passes are repeated until a pass makes no more swaps

The time complexity of the algorithm is the same as bubble sort, O(n²).

Example of implementation in PHP:

<?php

function cocktailShakeSort($numbers)

{

echo implode(",", $numbers),"\n";

$direction = false;

$sorted = false;

do {

$direction = !$direction;

$firstIndex = $direction == true ? 0 : count($numbers) - 1;

$lastIndex = $direction == true ? count($numbers) - 1 : 0;

$sorted = true;

for (

$i = $firstIndex;

$direction == true ? $i < $lastIndex : $i > $lastIndex;

$direction == true ? $i++ : $i--

) {

$lhsIndex = $direction ? $i : $i - 1;

$rhsIndex = $direction ? $i + 1 : $i;

$lhs = $numbers[$lhsIndex];

$rhs = $numbers[$rhsIndex];

if ($lhs > $rhs) {

$numbers[$lhsIndex] = $rhs;

$numbers[$rhsIndex] = $lhs;

$sorted = false;

}

}

} while ($sorted == false);

echo implode(",", $numbers);

}

$numbers = [2, 1, 4, 3, 69, 35, 55, 7, 7, 2, 6, 203, 9];

cocktailShakeSort($numbers);

?>

Links

Sources

https://www.youtube.com/watch?v=njClLBoEbfI

https://www.geeksforgeeks.org/cocktail-sort/

https://rosettacode.org/wiki/Sorting_algorithms/Cocktail_sort

Sleep Sort

Sleep Sort – sorting by sleeping, another representative of deterministic strange sorting algorithms.

It works like this:

- Loop through the list of elements

- A separate thread is started on each iteration

- The thread sleeps for a time proportional to the element value and outputs the value after the sleep

- When the loop ends, we wait for the thread with the longest sleep to complete; the values printed by then form the sorted list

Example code for sleep sort algorithm in C:

#include <stdio.h>

#include <stdlib.h>

#include <pthread.h>

#include <unistd.h>

typedef struct {

int number;

} ThreadPayload;

void *sortNumber(void *args) {

ThreadPayload *payload = (ThreadPayload*) args;

const int number = payload->number;

free(payload);

usleep(number * 1000);

printf("%d ", number);

return NULL;

}

int main(int argc, char *argv[]) {

const int numbers[] = {2, 42, 1, 87, 7, 9, 5, 35};

const int length = sizeof(numbers) / sizeof(int);

int maximal = 0;

pthread_t maximalThreadID;

printf("Sorting: ");

for (int i = 0; i < length; i++) {

pthread_t threadID;

int number = numbers[i];

printf("%d ", number);

ThreadPayload *payload = malloc(sizeof(ThreadPayload));

payload->number = number;

pthread_create(&threadID, NULL, sortNumber, (void *) payload);

if (maximal < number) {

maximal = number;

maximalThreadID = threadID;

}

}

printf("\n");

printf("Sorted: ");

pthread_join(maximalThreadID, NULL);

printf("\n");

return 0;

}

In this implementation I used the usleep function, which takes microseconds, with the value multiplied by 1000, so each element sleeps for that many milliseconds.

The time complexity of the algorithm is O(very long)

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/sleepSort

Sources

https://codoholicconfessions.wordpress.com/2017/05/21/strangest-sorting-algorithms/

https://twitter.com/javascriptdaily/status/856267407106682880?lang=en

https://stackoverflow.com/questions/6474318/what-is-the-time-complexity-of-the-sleep-sort

Stalin Sort

Stalin Sort – sorting by elimination, one of the sorting algorithms with data loss.

The algorithm is very productive and efficient; its time complexity is O(n).

It works like this:

- We loop through the array, comparing the current element with the next one

- If the next element is less than the current one, it is deleted

- As a result, we get a sorted array in O(n)

Example of the algorithm output:

Gulag: [1, 3, 2, 4, 6, 42, 4, 8, 5, 0, 35, 10]

Element 2 sent to Gulag

Element 4 sent to Gulag

Element 8 sent to Gulag

Element 5 sent to Gulag

Element 0 sent to Gulag

Element 35 sent to Gulag

Element 10 sent to Gulag

Numbers: [1, 3, 4, 6, 42]

Gulag: [2, 4, 8, 5, 0, 35, 10]

Python 3 code:

# Input list taken from the example output above

numbers = [1, 3, 2, 4, 6, 42, 4, 8, 5, 0, 35, 10]

gulag = []

print(f"Numbers: {numbers}")

print(f"Gulag: {numbers}")

i = 0

maximal = numbers[0]

while i < len(numbers):

    element = numbers[i]

    if maximal > element:

        # Out of order: remove the element, do not advance the index

        print(f"Element {element} sent to Gulag")

        gulag.append(element)

        del numbers[i]

    else:

        maximal = element

        i += 1

print(f"Numbers: {numbers}")

print(f"Gulag: {gulag}")

Among the disadvantages, one can note the loss of data, but if we move towards a utopian, ideal, sorted list in O(n), then how else?

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/stalinSort

Sources

https://github.com/gustavo-depaula/stalin-sort

https://www.youtube.com/shorts/juRL-Xn-E00

https://www.youtube.com/watch?v=L78i2YcyYfk

Selection Sort

Selection Sort – selection sorting algorithm. Selection of what? The minimum number!!!

The time complexity of the algorithm is O(n²)

The algorithm works as follows:

- We go through the array in a loop from left to right, remembering the current starting index and the number at that index; we will call this number A

- Inside the loop, we start another one that goes from left to right over the rest of the array, looking for something smaller than A

- When we find a smaller number, we remember its index; the smaller number now becomes A

- When the inner loop ends, swap the number at the starting index with the number A

- After the full pass of the outer loop, we get a sorted array

Example of algorithm execution:

Round 1

(29, 49, 66, 35, 7, 12, 80)

29 > 7

(7, 49, 66, 35, 29, 12, 80)

Round 1 ENDED

Round 2

(7, 49, 66, 35, 29, 12, 80)

49 > 35

35 > 29

29 > 12

(7, 12, 66, 35, 29, 49, 80)

Round 2 ENDED

Round 3

(7, 12, 66, 35, 29, 49, 80)

66 > 35

35 > 29

(7, 12, 29, 35, 66, 49, 80)

Round 3 ENDED

Round 4

(7, 12, 29, 35, 66, 49, 80)

(7, 12, 29, 35, 66, 49, 80)

Round 4 ENDED

Round 5

(7, 12, 29, 35, 66, 49, 80)

66 > 49

(7, 12, 29, 35, 49, 66, 80)

Round 5 ENDED

Round 6

(7, 12, 29, 35, 49, 66, 80)

(7, 12, 29, 35, 49, 66, 80)

Round 6 ENDED

Sorted: (7, 12, 29, 35, 49, 66, 80)

Having failed to find an Objective-C implementation on Rosetta Code, I wrote it myself:

#include <Foundation/Foundation.h>

@implementation SelectionSort

- (void)performSort:(NSMutableArray *)numbers

{

NSLog(@"%@", numbers);

for (int startIndex = 0; startIndex < numbers.count-1; startIndex++) {

int minimalNumberIndex = startIndex;

for (int i = startIndex + 1; i < numbers.count; i++) {

id lhs = [numbers objectAtIndex: minimalNumberIndex];

id rhs = [numbers objectAtIndex: i];

if ([lhs isGreaterThan: rhs]) {

minimalNumberIndex = i;

}

}

id temporary = [numbers objectAtIndex: minimalNumberIndex];

[numbers setObject: [numbers objectAtIndex: startIndex]

atIndexedSubscript: minimalNumberIndex];

[numbers setObject: temporary

atIndexedSubscript: startIndex];

}

NSLog(@"%@", numbers);

}

@end

You can build and run it either on macOS/Xcode or on any operating system that supports GNUstep; for example, I build it with Clang on Arch Linux.

Build script:

main.m \

-lobjc \

`gnustep-config --objc-flags` \

`gnustep-config --objc-libs` \

-I /usr/include/GNUstepBase \

-I /usr/lib/gcc/x86_64-pc-linux-gnu/12.1.0/include/ \

-lgnustep-base \

-o SelectionSort \

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/selectionSort

Sources

https://rosettacode.org/wiki/Sorting_algorithms/Selection_sort

https://ru.wikipedia.org/wiki/Сортировка_выбором

https://en.wikipedia.org/wiki/Selection_sort

https://www.youtube.com/watch?v=LJ7GYbX7qpM

Counting Sort

Counting sort – the algorithm of sorting by counting. What do you mean? Yes! Just like that!

The algorithm involves at least two arrays: the first is the list of integers to be sorted, the second is an array of size (maximum number – minimum number) + 1, initially containing only zeros. Then we iterate over the numbers in the first array; each number gives an index into the second array, and the counter at that index is incremented by one. After going through the entire list, the second array holds the number of repetitions of each number from the first. The algorithm has a serious cost: the second array also contains zeros for numbers that are not in the first list, the so-called memory overhead.

After building the second array, we iterate over it and, for each index, write the corresponding number into the sorted output as many times as its counter says, decrementing the counter to zero. Counters that were zero from the start are skipped.

An example of unoptimized operation of the counting sort algorithm:

- Input array 1,9,1,4,6,4,4

- Then the counting array has indices 0,1,2,3,4,5,6,7,8,9 (minimum number taken as 0, maximum 9)

- With final counters 0,2,0,0,3,0,1,0,0,1

- Total sorted array 1,1,4,4,4,6,9

Algorithm code in Python 3:

numbers = [42, 89, 69, 777, 22, 35, 42, 69, 42, 90, 777]

minimal = min(numbers)

maximal = max(numbers)

countListRange = maximal - minimal

countListRange += 1

countList = [0] * countListRange

print(numbers)

print(f"Minimal number: {minimal}")

print(f"Maximal number: {maximal}")

print(f"Count list size: {countListRange}")

for number in numbers:

    index = number - minimal

    countList[index] += 1

replacingIndex = 0

for index, count in enumerate(countList):

    for i in range(count):

        outputNumber = minimal + index

        numbers[replacingIndex] = outputNumber

        replacingIndex += 1

print(numbers)

Because two arrays are used, the time complexity of the algorithm is O(n + k)

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/countingSort

Sources

https://www.youtube.com/watch?v=6dk_csyWif0

https://www.youtube.com/watch?v=OKd534EWcdk

https://en.wikipedia.org/wiki/Counting_sort

https://rosettacode.org/wiki/Sorting_algorithms/Counting_sort

https://pro-prof.com/forums/topic/%D0%B0%D0%BB%D0%B3%D0%BE%D1%80%D0%B8%D1%82%D0%BC-%D1%81%D0%BE%D1%80%D1%82%D0%B8%D1%80%D0%BE%D0%B2%D0%BA%D0%B8-%D0%BF%D0%BE%D0%B4%D1%81%D1%87%D0%B5%D1%82%D0%BE%D0%BC

Bogosort

Bogosort, also called pseudo-sort or swamp sort, is one of the most useless sorting algorithms.

It works like this:

1. An array of numbers is fed to the input

2. An array of numbers is shuffled randomly

3. Check if the array is sorted

4. If not sorted, the array is shuffled again

5. This whole process is repeated until the array is sorted randomly.

As you can see, the performance of this algorithm is terrible; smart people estimate it at around O(n * n!), i.e. there is a chance of getting stuck rolling dice for the glory of the god of chaos for many years with the array still not sorted. Or maybe it will be sorted?

Implementation

To implement it in TypeScript, I needed to implement the following functions:

1. Shuffling an array of objects

2. Comparison of arrays

3. Generate a random number in the range from zero to a number (sic!)

4. Print progress, because it seems like sorting is going on forever

Below is the implementation code in TypeScript:

const randomInteger = (maximal: number) => Math.floor(Math.random() * maximal);

const isEqual = (lhs: any[], rhs: any[]) => lhs.every((val, index) => val === rhs[index]);

const shuffle = (array: any[]) => {

for (var i = 0; i < array.length; i++) {

// randomInteger(n) returns 0..n-1, so pass the full length to reach the last index

var destination = randomInteger(array.length);

var temp = array[i];

array[i] = array[destination];

array[destination] = temp;

}

}

let numbers: number[] = Array.from({length: 10}, ()=>randomInteger(10));

const originalNumbers = [...numbers];

const sortedNumbers = [...numbers].sort();

let numberOfRuns = 1;

do {

if (numberOfRuns % 1000 == 0) {

printoutProcess(originalNumbers, numbers, numberOfRuns);

}

shuffle(numbers);

numberOfRuns++;

} while (isEqual(numbers, sortedNumbers) == false)

console.log(`Success!`);

console.log(`Run number: ${numberOfRuns}`)

console.log(`Original numbers: ${originalNumbers}`);

console.log(`Current numbers: ${originalNumbers}`);

console.log(`Sorted numbers: ${sortedNumbers}`);

For debugging you can use VSCode and the TypeScript Debugger plugin by kakumei.

How long

Output of the algorithm:

src/bogosort.ts:1

Still trying to sort: 5,4,8,7,5,0,2,9,7,2, current shuffle 8,7,0,2,4,7,2,5,9,5, try number: 145000

src/bogosort.ts:2

Still trying to sort: 5,4,8,7,5,0,2,9,7,2, current shuffle 7,5,2,4,9,8,0,5,2,7, try number: 146000

src/bogosort.ts:2

Still trying to sort: 5,4,8,7,5,0,2,9,7,2, current shuffle 0,2,7,4,9,5,7,5,8,2, try number: 147000

src/bogosort.ts:2

Still trying to sort: 5,4,8,7,5,0,2,9,7,2, current shuffle 5,9,7,8,5,4,2,7,0,2, try number: 148000

src/bogosort.ts:2

Success!

src/bogosort.ts:24

Run number: 148798

src/bogosort.ts:25

Original numbers: 5,4,8,7,5,0,2,9,7,2

src/bogosort.ts:26

Current numbers: 5,4,8,7,5,0,2,9,7,2

src/bogosort.ts:27

Sorted numbers: 0,2,2,4,5,5,7,7,8,9

For an array of 10 numbers, Bogosort shuffled the original array 148798 times. A bit much, right?
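As a rough sanity check on that number, here is a small Python sketch that counts the distinct orderings of the array from the log. It assumes an unbiased shuffle in which every ordering is equally likely, which the shuffle above does not guarantee; the function name `distinct_permutations` is made up for this illustration.

```python
from collections import Counter
from math import factorial


def distinct_permutations(values):
    """Number of distinct orderings of a list that may contain duplicates."""
    total = factorial(len(values))
    for repeats in Counter(values).values():
        total //= factorial(repeats)
    return total


numbers = [5, 4, 8, 7, 5, 0, 2, 9, 7, 2]  # the array from the log above
orderings = distinct_permutations(numbers)

# Exactly one distinct ordering is the sorted one, so with a uniform shuffle
# each try succeeds with probability 1 / orderings, and the expected number
# of tries equals `orderings` (mean of a geometric distribution).
print(f"Distinct orderings: {orderings}")
```

Under that assumption the expected number of shuffles for this array is 453600, so a run of 148798 tries is on the lucky side.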

The algorithm can be used as a learning exercise, to understand the capabilities of the language you will have to work with on the market. Personally, I was surprised to learn that vanilla JS and TS still have no built-in algorithm for shuffling arrays, generating an integer in a range, or accessing object hashes for quick comparison.

Links

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/bogosort

https://www.typescriptlang.org/

https://marketplace.visualstudio.com/items?itemName=kakumei.ts-debug

Sources

https://www.youtube.com/watch?v=r2N3scbd_jg

https://en.wikipedia.org/wiki/Bogosort

RGB image to grayscale

In this note I will describe the algorithm for converting an RGB buffer to grayscale.

It is done quite simply: the color channels of each pixel in the buffer are transformed by a formula, and the output is a gray image.

Average method:

const average = (red + green + blue) / 3;

red = average;

green = average;

blue = average;

We add up the 3 color channels and divide by 3.

However, there is another method, the weighted average, which takes human color perception into account:

const luminance = 0.2126 * red + 0.7152 * green + 0.0722 * blue;

red = luminance;

green = luminance;

blue = luminance;
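To see the difference between the two formulas in numbers, here is a minimal Python sketch that applies both to a single pixel. The function name `to_gray` and the rounding to an integer are assumptions made for this illustration; the coefficients are the ones used in this note.

```python
def to_gray(red, green, blue, weighted_average=False):
    """Return the gray level for one RGB pixel (channels in 0..255)."""
    if weighted_average:
        # Weighted average: green contributes most, blue least
        gray = 0.2126 * red + 0.7152 * green + 0.0722 * blue
    else:
        # Plain average of the three channels
        gray = (red + green + blue) / 3
    return round(gray)


for name, pixel in [("pure green", (0, 255, 0)), ("pure blue", (0, 0, 255))]:
    print(name, to_gray(*pixel), to_gray(*pixel, weighted_average=True))
```

Pure green and pure blue get the same plain average (85), while the weighted method rates green much brighter (182) than blue (18).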

Which method is better to use? Whichever suits your specific task best. Next, a comparison of the methods using a test color grid:

Example implementation in JavaScript + HTML 5

image,

canvas,

weightedAverage

) {

const context = canvas.getContext('2d');

const imageWeight = image.width;

const imageHeight = image.height;

canvas.width = imageWeight;

canvas.height = imageHeight;

context.drawImage(image, 0, 0);

let pixels = context

.getImageData(

0,

0,

imageWeight,

imageHeight

);

for (let y = 0; y < pixels.height; y++) {

for (let x = 0; x < pixels.width; x++) {

const i = (y * 4) * pixels.width + x * 4;

let red = pixels.data[i];

let green = pixels.data[i + 1];

let blue = pixels.data[i + 2]

const average = (red + green + blue) / 3;

const luminance = 0.2126 * red +

0.7152 * green +

0.0722 * blue;

red = weightedAverage ? luminance : average;

green = weightedAverage ? luminance : average;

blue = weightedAverage ? luminance : average;

pixels.data[i] = red;

pixels.data[i + 1] = green;

pixels.data[i + 2] = blue;

}

}

context

.putImageData(

pixels,

0,

0,

0,

0,

pixels.width,

pixels.height

);

}

Sources

https://www.baeldung.com/cs/convert-rgb-to-grayscale

https://twitter.com/mudasobwa/status/1528046455587495940

https://rosettacode.org/wiki/Grayscale_image

Links

http://papugi.demensdeum.repl.co/

Acknowledgements

Thanks to Aleksei Matiushkin (https://twitter.com/mudasobwa) for the tip about Rosetta Code

Longest Common Substring

In this note I will describe an algorithm for solving the longest common substring problem. Let’s say we are trying to decrypt encrypted binary data; as a first step, let’s try to find common patterns by searching for the longest repeated substring.

Input string for example:

adasDATAHEADER??jpjjwerthhkjbcvkDATAHEADER??kkasdf

We are looking for a string that is repeated twice:

DATAHEADER??

Prefixes

First, let’s write a method that compares the prefixes of two strings and returns the longest string whose characters are the same at the start of the left string and at the start of the right string.

For example, for the strings:

val lhs = "asdfWUKI"

val rhs = "asdfIKUW"

The resulting string is – asdf

Example in Kotlin:

import kotlin.math.min

fun longestPrefix(lhs: String, rhs: String): String {

    // Only the overlapping part of the two strings can match

    val maximalLength = min(lhs.length, rhs.length)

    for (i in 0 until maximalLength) {

        // Stop at the first position where the characters differ

        if (lhs[i] != rhs[i]) {

            return lhs.substring(0, i)

        }

    }

    // One string is a prefix of the other

    return lhs.substring(0, maximalLength)

}

Brute Force

When the good way doesn’t work out, it’s worth resorting to brute force. Using the longestPrefix method, we go through the string in two loops: the first takes the substring from i to the end, the second takes the substring from i + 1 to the end, and both are passed to the longest prefix search. The time complexity of this algorithm is between O(n^2) and O(n^3), depending on how long the compared prefixes are.

Example in Kotlin:

fun searchLongestRepeatedSubstring(searchString: String): String {

var longestRepeatedSubstring = ""

for (x in 0..searchString.length-1) {

val lhs = searchString.substring(x)

for (y in x+1..searchString.length-1) {

val rhs = searchString.substring(y)

val longestPrefix = longestPrefix(lhs, rhs)

if (longestRepeatedSubstring.length < longestPrefix.length) {

longestRepeatedSubstring = longestPrefix

}

}

}

return longestRepeatedSubstring

}

Suffix array

For a more elegant solution, we need a tool: a data structure called a "Suffix Array". It is an array of substrings filled in a loop, where each substring runs from the next character of the string to the end.

For example, for the string:

adasDATAHEADER??

The suffix array looks like this:

adasDATAHEADER??

dasDATAHEADER??

asDATAHEADER??

sDATAHEADER??

DATAHEADER??

ATAHEADER??

TAHEADER??

AHEADER??

HEADER??

EADER??

ADER??

DER??

ER??

R??

??

?

Solving by sorting

We sort the suffix array, then we go through all the elements in a loop where the left hand (lhs) contains the current element, the right hand (rhs) contains the next one, and we calculate the longest prefix using the longestPrefix method.

Example in Kotlin:

fun searchLongestRepeatedSubstring(searchString: String): String {

val suffixTree = suffixArray(searchString)

val sortedSuffixTree = suffixTree.sorted()

var longestRepeatedSubstring = ""

for (i in 0..sortedSuffixTree.count() - 2) {

val lhs = sortedSuffixTree[i]

val rhs = sortedSuffixTree[i+1]

val longestPrefix = longestPrefix(lhs, rhs)

if (longestRepeatedSubstring.length < longestPrefix.length) {

longestRepeatedSubstring = longestPrefix

}

}

return longestRepeatedSubstring

}

The time complexity of the algorithm is O(N log N) in terms of suffix comparisons (each comparison can itself take up to O(N) characters), which is much better than the brute-force solution.
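The Kotlin code above calls a suffixArray helper that is not shown. The Python sketch below is one possible reading of the whole approach under the description given here; the names `suffix_array`, `longest_prefix` and `search_longest_repeated_substring` are my own and this is not the original implementation.

```python
def suffix_array(text):
    """All suffixes of text, from the whole string down to the last character."""
    return [text[i:] for i in range(len(text))]


def longest_prefix(lhs, rhs):
    """Longest common prefix of two strings."""
    length = 0
    for left_char, right_char in zip(lhs, rhs):
        if left_char != right_char:
            break
        length += 1
    return lhs[:length]


def search_longest_repeated_substring(text):
    suffixes = sorted(suffix_array(text))
    longest = ""
    # After sorting, suffixes that share a long prefix become neighbours,
    # so it is enough to compare each suffix with the next one.
    for lhs, rhs in zip(suffixes, suffixes[1:]):
        candidate = longest_prefix(lhs, rhs)
        if len(candidate) > len(longest):
            longest = candidate
    return longest


print(search_longest_repeated_substring(
    "adasDATAHEADER??jpjjwerthhkjbcvkDATAHEADER??kkasdf"))
```

For the input string from the beginning of this note it prints DATAHEADER??.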

Sources

https://en.wikipedia.org/wiki/Longest_common_substring_problem

Source code

https://gitlab.com/demensdeum/algorithms

Insertion Sort, Merge Sort

Insertion Sort

Insertion Sort – each element is compared with the previous ones in the list and swapped with its left neighbour while that neighbour is larger; otherwise the inner comparison loop stops. Since the elements are processed from first to last, each element is compared against an already sorted part of the list, which *may* reduce the overall running time. The time complexity of the algorithm is O(n^2), i.e. the same as bubble sort.
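A minimal Python sketch of this description, not the implementation from the repository; the function name `insertion_sort` and the choice to return a sorted copy are assumptions made for illustration.

```python
def insertion_sort(items):
    """Return a sorted copy of items using insertion sort."""
    result = list(items)
    for i in range(1, len(result)):
        j = i
        # Move the element left while its left neighbour is larger;
        # stop as soon as it fits into the already sorted prefix.
        while j > 0 and result[j - 1] > result[j]:
            result[j - 1], result[j] = result[j], result[j - 1]
            j -= 1
    return result


print(insertion_sort([5, 2, 4, 6, 1, 3]))
```

On an already sorted list the inner loop stops immediately for every element, which is where the possible saving in running time comes from.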

Merge Sort

Merge sort – the list is divided into groups of one element, then the groups are “merged” in pairs, with the elements compared as they are merged. In my implementation, when merging a pair, the elements on the left are compared with the elements on the right and moved to the resulting list; if there are no more elements on the left, all the remaining elements on the right are added to the resulting list (comparing them again is unnecessary, since every group has already been sorted by the previous iterations).
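The pairwise merging described above can be sketched in Python roughly as follows. This is a single-threaded illustration under my own naming (`merge`, `merge_sort`), not the code from the repository.

```python
def merge(left, right):
    """Merge two sorted lists into one sorted list."""
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # One side is exhausted; the rest of the other side is already sorted
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


def merge_sort(items):
    """Bottom-up merge sort: start from one-element groups, merge in pairs."""
    groups = [[item] for item in items]
    while len(groups) > 1:
        merged_groups = []
        for k in range(0, len(groups), 2):
            if k + 1 < len(groups):
                merged_groups.append(merge(groups[k], groups[k + 1]))
            else:
                # A group without a partner waits for the next round
                merged_groups.append(groups[k])
        groups = merged_groups
    return groups[0] if groups else []


print(merge_sort(["John", "Alice", "Mike", "#1", "20", "60", "60", "DoubleTrouble"]))
```

Each round of the while loop merges independent pairs, which is why the pair merging stage lends itself to running in separate threads.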

The operation of this algorithm is very easy to parallelize, the pair merging stage can be performed in threads, waiting for the end of iterations in the dispatcher.

Output of the algorithm for single-threaded execution:

["John", "Alice", "Mike", "#1", "Артем", "20", "60", "60", "DoubleTrouble"]

[["John"], ["Alice"], ["Mike"], ["#1"], ["Артем"], ["20"], ["60"], ["60"], ["DoubleTrouble"]]

[["Alice", "John"], ["#1", "Mike"], ["20", "Артем"], ["60", "60"], ["DoubleTrouble"]]

[["#1", "Alice", "John", "Mike"], ["20", "60", "60", "Артем"], ["DoubleTrouble"]]

[["#1", "20", "60", "60", "Alice", "John", "Mike", "Артем"], ["DoubleTrouble"]]

["#1", "20", "60", "60", "Alice", "DoubleTrouble", "John", "Mike", "Артем"]

Output of the algorithm for multithreaded execution:

["John", "Alice", "Mike", "#1", "Артем", "20", "60", "60", "DoubleTrouble"]

[["John"], ["Alice"], ["Mike"], ["#1"], ["Артем"], ["20"], ["60"], ["60"], ["DoubleTrouble"]]

[["20", "Артем"], ["Alice", "John"], ["60", "60"], ["#1", "Mike"], ["DoubleTrouble"]]

[["#1", "60", "60", "Mike"], ["20", "Alice", "John", "Артем"], ["DoubleTrouble"]]

[["DoubleTrouble"], ["#1", "20", "60", "60", "Alice", "John", "Mike", "Артем"]]

["#1", "20", "60", "60", "Alice", "DoubleTrouble", "John", "Mike", "Артем"]

The time complexity of the algorithm is O(n*log(n)), which is noticeably better than O(n^2)

Sources

https://en.wikipedia.org/wiki/Insertion_sort

https://en.wikipedia.org/wiki/Merge_sort

Source code

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/insertionSort

https://gitlab.com/demensdeum/algorithms/-/tree/master/sortAlgorithms/mergeSort

Bubble Sort in Erlang

Bubble sort is pretty boring, but it becomes more interesting if you try to implement it in a functional language for telecom – Erlang.

We have a list of numbers that we need to sort. The bubble sort algorithm goes through the entire list, comparing numbers in pairs. On each comparison, the smaller number of the pair is appended to the output list, and the larger one is carried forward to be compared with the next number. This pass is repeated until a pass makes no more changes to the list.

In practice, it is not worth using because of the high time complexity of the algorithm – O(n^2); I implemented it in Erlang, in an imperative style, but if you are interested, you can look for better options:

-module(bubbleSort).

-export([main/1]).

startBubbleSort([CurrentHead|Tail]) ->

compareHeads(CurrentHead, Tail, [], [CurrentHead|Tail]).

compareHeads(CurrentHead, [NextHead|Tail], [], OriginalList) ->

if

CurrentHead < NextHead ->

compareHeads(NextHead, Tail, [CurrentHead], OriginalList);

true ->

compareHeads(CurrentHead, Tail, [NextHead], OriginalList)

end;

compareHeads(CurrentHead, [NextHead|Tail], OriginalOutputList, OriginalList) ->

if

CurrentHead < NextHead ->

OutputList = OriginalOutputList ++ [CurrentHead],

compareHeads(NextHead, Tail, OutputList, OriginalList);

true ->

OutputList = OriginalOutputList ++ [NextHead],

compareHeads(CurrentHead, Tail, OutputList, OriginalList)

end;

compareHeads(CurrentHead, [], OriginalOutputList, OriginalList) ->

OutputList = OriginalOutputList ++ [CurrentHead],

if

OriginalList == OutputList ->

io:format("OutputList: ~w~n", [OutputList]);

true ->

startBubbleSort(OutputList)

end.

main(_) ->

UnsortedList = [69,7,4,44,2,9,10,6,26,1],

startBubbleSort(UnsortedList).

Installation and launch

In Ubuntu, Erlang is very easy to install, just type sudo apt install erlang in the terminal. In this language, each file must be a module, with a list of functions that can be used from the outside – export. Interesting features of the language include the absence of variables, only constants, the absence of standard syntax for OOP (which does not prevent the use of OOP techniques), and of course parallel computations without locks based on the actor model.

You can launch the module either through the interactive console erl, running one command after another, or more simply through escript bubbleSort.erl. The file will look different for the two cases; for example, for escript you need to define a main function, from which execution will start.

Sources

https://www.erlang.org/

https://habr.com/ru/post/197364/

Source code

https://gitlab.com/demensdeum/algorithms/blob/master/bubbleSort/bubbleSort.erl

Lexicographic comparison algorithm

The lexicographic string comparison algorithm works very simply: the character codes are compared in a loop, and the result is returned as soon as two characters are not equal.

An example for the C language can be found here:

https://github.com/gcc-mirror/gcc/blob/master/libiberty/memcmp.c

It should be taken into account that the characters need to be compared in a single fixed-width encoding; for example, in Swift I used character-by-character comparison in UTF-32. Sorting an array using memcmp will work reliably only for single-byte characters; in other cases (variable-length encodings) the order may be incorrect. I do not rule out an implementation based on variable-length encodings, but most likely it will be an order of magnitude more complicated.

The time complexity of the algorithm is O(1) in the best case and O(n) in the average and worst case.
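As a rough illustration of the loop described above, here is a Python sketch. Python strings are sequences of Unicode code points, so the comparison is effectively fixed-width; the function name `lexicographic_compare` and the -1/0/1 return convention are assumptions, not the Swift implementation linked in this note.

```python
def lexicographic_compare(lhs, rhs):
    """Return -1, 0 or 1, comparing two strings by Unicode code point."""
    for left_char, right_char in zip(lhs, rhs):
        left_code, right_code = ord(left_char), ord(right_char)
        if left_code != right_code:
            # The first differing character decides the result
            return -1 if left_code < right_code else 1
    # All shared positions are equal: the shorter string comes first
    if len(lhs) == len(rhs):
        return 0
    return -1 if len(lhs) < len(rhs) else 1


print(lexicographic_compare("abc", "abd"))
```

The best case corresponds to strings that differ in the first character; the worst case is two equal strings, where every character has to be checked.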

Sources

https://ru.wikipedia.org/wiki/Лексикографические_порядок

Source code

https://gitlab.com/demensdeum/algorithms/blob/master/lexiCompare/lexiCompare.swift

Binary search

Let’s say we need to find out whether the email address “demensdeum@gmail.com” is on the list of email addresses allowed to receive emails.

We could go through the entire list from the first to the last element, checking whether each element is equal to the specified address; that is, implement a linear search algorithm. But it will take a long time, won’t it?

To answer this question, we use the time complexity of algorithms, expressed in big O notation. In the worst case, linear search takes as many steps as there are elements in the array, n; in big O notation this is written O(n). Next, we need to explain that any algorithm has three performance indicators: best-case, worst-case, and average-case execution time. For example, if the email address “demensdeum@gmail.com” is at the first index of the array, it will be found in the first step of the algorithm, so the best-case execution time is O(1); if it is at the end of the list, that is the worst case, O(n).

But what about the details of the software implementation and the hardware performance; they should influence big O, right? Now take a deep breath and imagine that time complexity is calculated for some abstract ideal machine, which runs only this algorithm and nothing else.

Algorithm

Okay, it turns out that linear search is quite slow, so let’s try Binary Search. First, it should be explained that we will not be working with binary data; the method got its name from the way it operates. We start by sorting the array in lexicographic order. Then the algorithm takes the range of the entire array, gets the middle element of the range, compares it lexicographically with the target, and depending on the result decides which range to search next: the upper half of the current one or the lower one. That is, at each step of the search a decision is made between two possibilities, binary logic. This step is repeated until the word is either found or the lower and upper indices of the range cross, which means it is not in the array.

The performance of this algorithm: the best case, when the element in the middle of the array is found immediately, is O(1); the worst case is O(log n).
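The steps above can be sketched in Python as follows. This is an illustration only: the function name `binary_search` is my own, and every address in the list except the one from the text is made up.

```python
def binary_search(sorted_items, target):
    """Return the index of target in sorted_items, or -1 if it is absent."""
    low = 0
    high = len(sorted_items) - 1
    while low <= high:
        middle = (low + high) // 2
        candidate = sorted_items[middle]
        if candidate == target:
            return middle
        if candidate < target:
            low = middle + 1   # continue in the upper half
        else:
            high = middle - 1  # continue in the lower half
    return -1  # the indices crossed: the target is not in the list


# Hypothetical allow-list; it must be sorted before searching
allowed = sorted([
    "zed@example.com",
    "demensdeum@gmail.com",
    "alice@example.com",
    "bob@example.com",
])
print(binary_search(allowed, "demensdeum@gmail.com"))
```

Each iteration halves the remaining range, so a list of n addresses needs at most about log2(n) + 1 comparisons.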

Pitfalls

When implementing binary search, I not only ran into the interesting problem that lexicographic comparison is not standardized across programming language libraries, but also discovered that there is no single standard for implementing localeCompare within JavaScript. The ECMAScript standard allows different implementations of this function, which is why sorting with localeCompare can produce completely different results on different JavaScript engines.

Therefore, for the algorithm to work correctly, the sort and the search must use the same lexicographic comparison algorithm, otherwise nothing will work. If, for example, you sort an array in Scala and search it using nodejs, without implementing your own sorting and searching on top of one comparison implementation, nothing awaits you except disappointment in humanity.
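The same pitfall can be reproduced inside a single language. In the Python sketch below, a list is sorted case-insensitively and then searched with the default code-point comparison; the word list and the helper name `contains` are made up for illustration.

```python
from bisect import bisect_left

words = ["cherry", "apple", "Banana"]

# Sorted with a case-insensitive comparison: ['apple', 'Banana', 'cherry']
sorted_words = sorted(words, key=str.lower)


def contains(sorted_keys, key):
    """Binary search using Python's default code-point comparison."""
    index = bisect_left(sorted_keys, key)
    return index < len(sorted_keys) and sorted_keys[index] == key


# Mismatch: the list order is case-insensitive, the search is case-sensitive
mismatched = contains(sorted_words, "Banana")

# Consistent: the search runs over the same lowercased keys used for sorting
keys = [word.lower() for word in sorted_words]
consistent = contains(keys, "banana")

print(mismatched, consistent)
```

The mismatched search reports that "Banana" is missing even though it is in the list, while the consistent search finds it.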

Sources

What is lexicographic comparison and what does it represent?

Why is log N used instead of lb N when calculating the complexity of algorithms?

Binary search

Know the complexities of algorithms

https://stackoverflow.com/questions/52941016/sorting-in-localecompare-in-javascript

Source code

https://gitlab.com/demensdeum/algorithms