In the last article, we looked at the theory of the Interpreter pattern: we learned what an AST (Abstract Syntax Tree) is and how to model terminal and non-terminal expressions. This time, let’s step away from theory and see how the pattern is applied in serious commercial projects that we all use every day!

Spoiler: You may be using the Interpreter pattern right now, just by reading this text in your browser!

One of the most striking and perhaps most important industrial examples of this pattern is JavaScript. The language, originally thrown together in a hurry, today runs on billions of devices precisely thanks to the concept of interpretation.

10 days that changed the Internet

The history of JavaScript is full of legends. In 1995, Brendan Eich, while working at Netscape Communications, was given the task of creating a simple scripting language that could run directly in the browser (Netscape Navigator) and make web pages interactive. Management wanted a syntax resembling Java, which was hugely popular at the time, but the language was meant for web designers rather than professional engineers.

Eich had only 10 days to write the first prototype of the language, which was originally called Mocha (then LiveScript, and finally JavaScript, for marketing reasons). The rush was not accidental: Microsoft was hot on Netscape’s heels, actively preparing its own scripting language, VBScript, for embedding in Internet Explorer. Netscape urgently needed an answer so as not to fall behind in the looming browser war.

There was simply no time to write a complex compiler to machine code. The obvious and fastest solution for Eich was the architecture of a classic interpreter.

In simplified form, the first engine (the ancestor of today’s SpiderMonkey) worked like this:

- It read the text source code of the script from the page.

- The lexical analyzer broke the text into tokens.

- The parser built an Abstract Syntax Tree (AST). In terms of the Interpreter pattern, this tree consisted of terminal expressions (string literals, numbers like 42) and non-terminal expressions (function calls, statements such as if and while).

- Then the virtual machine “traversed” this tree step by step, executing the instructions embedded in it at each node (calling a method similar to Interpret()).
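The four steps above can be sketched as a tiny tree-walking interpreter for arithmetic expressions. This is a minimal TypeScript illustration under stated assumptions, not the original engine’s code: the factory functions `numberLiteral`, `variable` and `binaryOp`, the `tokenize`/`parse` helpers and the `Map`-based context are hypothetical names chosen to mirror the classic pattern from the previous article.

```typescript
// The context: the interpreter's memory (here, just named numeric variables).
type Context = Map<string, number>;

// Every AST node exposes interpret(), as in the classic pattern.
interface Expression {
  interpret(ctx: Context): number;
}

// Terminal expression: a numeric literal such as 42.
const numberLiteral = (value: number): Expression => ({ interpret: () => value });

// Terminal expression: a variable, looked up in the context.
const variable = (name: string): Expression => ({
  interpret(ctx) {
    const value = ctx.get(name);
    if (value === undefined) throw new Error(`Undefined variable: ${name}`);
    return value;
  },
});

// Non-terminal expression: interprets both children first, then combines the results.
const binaryOp = (op: string, left: Expression, right: Expression): Expression => ({
  interpret(ctx) {
    const a = left.interpret(ctx);
    const b = right.interpret(ctx);
    switch (op) {
      case "+": return a + b;
      case "-": return a - b;
      case "*": return a * b;
      case "/": return a / b;
      default: throw new Error(`Unknown operator: ${op}`);
    }
  },
});

// Lexer: split the source into number, identifier and operator tokens.
// (Unrecognized characters are silently skipped in this sketch.)
function tokenize(source: string): string[] {
  return source.match(/\d+(?:\.\d+)?|[A-Za-z_]\w*|[-+*\/()]/g) ?? [];
}

// Parser: recursive descent that builds the AST with the usual precedence rules.
function parse(tokens: string[]): Expression {
  let pos = 0;
  const peek = (): string | undefined => tokens[pos];
  const next = (): string => {
    const token = tokens[pos++];
    if (token === undefined) throw new Error("Unexpected end of input");
    return token;
  };

  // primary := number | identifier | "(" expr ")"
  function primary(): Expression {
    const token = next();
    if (token === "(") {
      const inner = expr();
      if (next() !== ")") throw new Error("Expected ')'");
      return inner;
    }
    if (/^\d/.test(token)) return numberLiteral(Number(token));
    if (/^[A-Za-z_]/.test(token)) return variable(token);
    throw new Error(`Unexpected token: ${token}`);
  }

  // term := primary (("*" | "/") primary)*
  function term(): Expression {
    let node: Expression = primary();
    while (peek() === "*" || peek() === "/") node = binaryOp(next(), node, primary());
    return node;
  }

  // expr := term (("+" | "-") term)*
  function expr(): Expression {
    let node: Expression = term();
    while (peek() === "+" || peek() === "-") node = binaryOp(next(), node, term());
    return node;
  }

  const ast = expr();
  if (pos !== tokens.length) throw new Error(`Unexpected token: ${tokens[pos]}`);
  return ast;
}

// Parse once, then interpret the AST against a context holding x = 5.
const ast = parse(tokenize("2 * (x + 1) - 4 / 2"));
console.log(ast.interpret(new Map([["x", 5]]))); // 10
```

Note that parsing happens once and produces a reusable tree; interpretation is a separate recursive walk that only needs a context.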

Context and Objects

Remember the Context object that we had to pass to the Interpret(Context context) method in the classic implementation? The interpreter needs it to store the current memory state.

In JavaScript, the role of this context at the top level is played by the global object (for example, window in a browser). When an AST node tries to, say, write text to the page via document.write("Hello"), the interpreter looks up document in its context (it is a property of the global object) and calls the corresponding internal browser API.

It is thanks to the interpreter that JavaScript can interact so easily with the DOM (Document Object Model): DOM elements are simply objects in the context that tree nodes can access.
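To make this concrete, here is a hedged TypeScript sketch of how tree nodes might reach a host API through the context. The global object, the fake `document` whose `write` function simply records text in an array, and the node factories (`identifier`, `member`, `call`, `stringLiteral`) are all hypothetical stand-ins; real engines expose the DOM through native bindings rather than like this.

```typescript
// Values the toy interpreter can see; host functions are native TypeScript callbacks.
type HostFunction = (...args: Value[]) => Value;
interface HostObject {
  [key: string]: Value;
}
type Value = string | number | undefined | HostFunction | HostObject;

// The context: a global object (like `window`) whose properties every node can reach.
interface Context {
  global: HostObject;
}

interface AstNode {
  interpret(ctx: Context): Value;
}

// Terminal: a string literal such as "Hello".
const stringLiteral = (value: string): AstNode => ({ interpret: () => value });

// Terminal: an identifier, resolved against the global object in the context.
const identifier = (name: string): AstNode => ({
  interpret(ctx) {
    if (!(name in ctx.global)) throw new Error(`${name} is not defined`);
    return ctx.global[name];
  },
});

// Non-terminal: property access such as document.write.
const member = (object: AstNode, property: string): AstNode => ({
  interpret(ctx) {
    const target = object.interpret(ctx);
    if (typeof target !== "object") throw new Error(`Cannot read property ${property}`);
    return target[property];
  },
});

// Non-terminal: a call. Arguments are interpreted first, then control passes to the host API.
const call = (callee: AstNode, args: AstNode[]): AstNode => ({
  interpret(ctx) {
    const fn = callee.interpret(ctx);
    if (typeof fn !== "function") throw new Error("Callee is not a function");
    return fn(...args.map((arg) => arg.interpret(ctx)));
  },
});

// Hypothetical host environment: a fake `document` whose write() records text.
const pageOutput: string[] = [];
const globalObject: HostObject = {
  document: {
    write: (...args: Value[]) => {
      pageOutput.push(args.map(String).join(""));
      return undefined;
    },
  },
};

// AST for: document.write("Hello")
const program = call(member(identifier("document"), "write"), [stringLiteral("Hello")]);
program.interpret({ global: globalObject });
console.log(pageOutput); // [ 'Hello' ]
```

The nodes themselves know nothing about browsers; swapping in a different global object (for example, one without `document`, as in a server runtime) changes what the same tree can do.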

Evolution of the interpreter: JIT Compilation

For a long time, JavaScript engines in browsers remained “pure” interpreters, and this had a big disadvantage: low speed. Walking the tree node by node every time a script ran slowed down complex web applications.

With the arrival of Google’s V8 engine (built into Chrome) in 2008, a revolution occurred. Engineers realized that a plain interpreter was not enough for the modern web. Engines became more complex: they still build an AST, but they now also use JIT (Just-In-Time) compilation.

Modern JS engines (V8, SpiderMonkey) work as a multi-tier pipeline:

- A fast, simple baseline interpreter (Ignition, in V8’s case) starts executing your code almost immediately, without waiting for machine code to be generated (this is where the classic pattern still works).

- Meanwhile, the engine monitors “hot” sections of code (loops or functions that run thousands of times).

- The JIT compiler (TurboFan, in V8) compiles these sections into optimized machine code, so subsequent executions bypass the slower interpreter.
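The tiering idea can be illustrated with a deliberately simplified TypeScript sketch. Everything here is hypothetical: the `TieredFunction` class, the call-count threshold, and especially the “compiler”, which merely turns the AST into nested closures instead of emitting machine code. Real engines also collect type feedback and can deoptimize back to the interpreter, which this sketch omits.

```typescript
// AST for simple expressions over a single parameter `x` (hypothetical, for illustration).
type Expr =
  | { kind: "num"; value: number }
  | { kind: "param" }
  | { kind: "add"; left: Expr; right: Expr }
  | { kind: "mul"; left: Expr; right: Expr };

// Tier 1: a tree-walking interpreter. It starts immediately but dispatches on every node.
function interpret(e: Expr, x: number): number {
  switch (e.kind) {
    case "num": return e.value;
    case "param": return x;
    case "add": return interpret(e.left, x) + interpret(e.right, x);
    case "mul": return interpret(e.left, x) * interpret(e.right, x);
  }
}

// Tier 2: "compile" the AST once into nested closures so later calls skip the per-node
// switch. This is only a stand-in for a real JIT, which would emit machine code.
type Compiled = (x: number) => number;

function compile(e: Expr): Compiled {
  switch (e.kind) {
    case "num": {
      const v = e.value;
      return () => v;
    }
    case "param":
      return (x) => x;
    case "add": {
      const l = compile(e.left);
      const r = compile(e.right);
      return (x) => l(x) + r(x);
    }
    case "mul": {
      const l = compile(e.left);
      const r = compile(e.right);
      return (x) => l(x) * r(x);
    }
  }
}

// Counts calls and switches to the compiled version once the function becomes "hot".
class TieredFunction {
  private calls = 0;
  private compiled: Compiled | null = null;
  private readonly body: Expr;
  private readonly hotThreshold: number;

  constructor(body: Expr, hotThreshold = 1000) {
    this.body = body;
    this.hotThreshold = hotThreshold;
  }

  call(x: number): number {
    if (this.compiled) return this.compiled(x); // fast path after tier-up
    this.calls++;
    if (this.calls >= this.hotThreshold) this.compiled = compile(this.body);
    return interpret(this.body, x); // this call still runs in the interpreter
  }

  get isOptimized(): boolean {
    return this.compiled !== null;
  }
}

// f(x) = x * x + 1, with a tiny threshold so the tier-up is easy to observe.
const f = new TieredFunction(
  { kind: "add", left: { kind: "mul", left: { kind: "param" }, right: { kind: "param" } }, right: { kind: "num", value: 1 } },
  3,
);
for (let i = 0; i < 5; i++) console.log(i, f.call(i), f.isOptimized);
```

With a threshold of 3, the first three calls run in the tree-walking interpreter; the third one also triggers compilation, and every later call takes the compiled fast path. Both tiers must return the same results, which is the key invariant of any tiered engine.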

It was this combination of the instant start of the interpreter and the computing power of compilation that allowed JavaScript to take over the world, becoming the language of servers (Node.js) and mobile applications (React Native).

Interpreter in the gaming industry

Despite the dominance of C++ in performance-critical code, the Interpreter pattern is an industry standard in game development for game logic. Why? So that game designers can build gameplay without the risk of crashing the engine and without constantly recompiling it.

An excellent historical example is UnrealScript, the language in which the gameplay logic of Unreal Tournament and Gears of War was written on Unreal Engine 1, 2 and 3. Scripts were compiled into compact bytecode for an abstract machine, which the engine’s virtual machine then executed (interpreted) step by step.

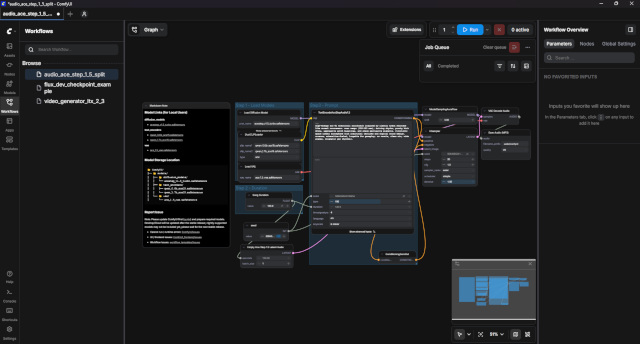

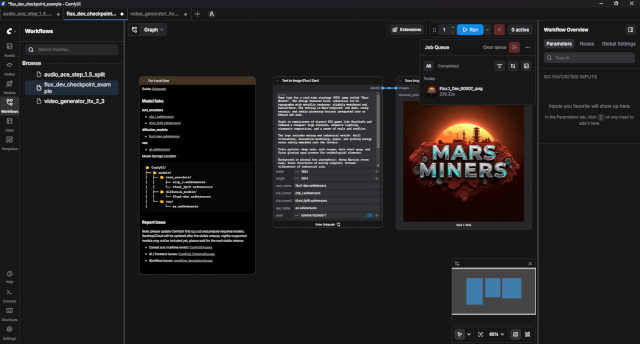
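To illustrate the compile-to-bytecode-then-interpret scheme used by UnrealScript, here is a hedged TypeScript sketch of a tiny stack-based virtual machine. The instruction set (`push`, `load`, `add`, `mul`), the `compile`/`run` functions and the `health`/`bonus` variables are hypothetical; UnrealScript’s real bytecode format was considerably richer.

```typescript
// Source-level AST that the compiler starts from (hypothetical, for illustration).
type Expr =
  | { kind: "num"; value: number }
  | { kind: "var"; name: string }
  | { kind: "add"; left: Expr; right: Expr }
  | { kind: "mul"; left: Expr; right: Expr };

// Hypothetical instruction set for a tiny stack machine (not UnrealScript's real bytecode).
type Instr =
  | { op: "push"; value: number } // push a constant
  | { op: "load"; name: string } // push a variable from the object's memory
  | { op: "add" | "mul" }; // pop two operands, push the result

// Compiler: flatten the tree into postfix bytecode (operands first, then the operator).
function compile(e: Expr, out: Instr[] = []): Instr[] {
  switch (e.kind) {
    case "num":
      out.push({ op: "push", value: e.value });
      break;
    case "var":
      out.push({ op: "load", name: e.name });
      break;
    case "add":
    case "mul":
      compile(e.left, out);
      compile(e.right, out);
      out.push({ op: e.kind });
      break;
  }
  return out;
}

// Virtual machine: a fetch-decode-execute loop over the bytecode with an operand stack.
// `memory` plays the role of the context (the game object's variables).
function run(code: Instr[], memory: Record<string, number>): number {
  const stack: number[] = [];
  for (const instr of code) {
    switch (instr.op) {
      case "push":
        stack.push(instr.value);
        break;
      case "load": {
        const value = memory[instr.name];
        if (value === undefined) throw new Error(`Unknown variable: ${instr.name}`);
        stack.push(value);
        break;
      }
      case "add":
      case "mul": {
        const b = stack.pop()!;
        const a = stack.pop()!;
        stack.push(instr.op === "add" ? a + b : a * b);
        break;
      }
    }
  }
  return stack.pop()!;
}

// Bytecode for: health * 2 + bonus
const bytecode = compile({
  kind: "add",
  left: { kind: "mul", left: { kind: "var", name: "health" }, right: { kind: "num", value: 2 } },
  right: { kind: "var", name: "bonus" },
});
console.log(bytecode.map((i) => i.op).join(" ")); // load push mul load add
console.log(run(bytecode, { health: 50, bonus: 7 })); // 107
```

Compared with walking the tree directly, a flat instruction array is compact and can be stored or shipped separately from the source, which is one common reason engines interpret bytecode rather than the AST itself.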

Visual graph scripts (Blueprints)

Today, text scripting has largely given way to visual programming: the Blueprints system in Unreal Engine 4 and 5.

If you’ve ever opened a Blueprint in Unreal Engine, you’ve seen many nodes connected by wires. Architecturally, a Blueprint graph plays the role of a huge Abstract Syntax Tree (AST) drawn on the screen:

- Terminal Expressions: Constant nodes. For example, a node that simply stores the number 42 or a string. They return a specific value when interpreted.

- Non-Terminal Expressions: Compute nodes (such as Add) and flow-control nodes (such as Branch). The interpreter first evaluates their input pins recursively; a compute node then exposes its result on an output pin, while Branch uses its condition to choose which execution wire to follow.

The role of the context here is played by the memory of a specific game object instance (an Actor). The engine’s virtual machine safely “walks” through this graph, requesting data and following transitions.
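A Blueprint-style graph can be modeled with two kinds of nodes: pure data nodes, which the interpreter evaluates on demand by pulling their inputs, and execution nodes, which it visits one after another along execution wires. The following TypeScript sketch is a hypothetical model, not Unreal’s API (the real engine first compiles Blueprint graphs to bytecode for its script virtual machine); the node types, the `Health` variable and the `onHit` graph are invented for illustration.

```typescript
// Memory of one Actor instance: the context the graph reads and writes.
type ActorMemory = Record<string, number>;

// Pure data nodes: evaluated on demand by recursively pulling their input pins.
// Booleans are encoded as 1/0 to keep the sketch to a single value type.
type DataNode =
  | { type: "const"; value: number }
  | { type: "get"; variable: string }
  | { type: "add"; a: DataNode; b: DataNode }
  | { type: "greater"; a: DataNode; b: DataNode };

// Execution nodes: linked by execution wires (next / ifTrue / ifFalse).
type ExecNode =
  | { type: "set"; variable: string; value: DataNode; next?: ExecNode }
  | { type: "print"; message: string; next?: ExecNode }
  | { type: "branch"; condition: DataNode; ifTrue?: ExecNode; ifFalse?: ExecNode };

function evaluate(node: DataNode, actor: ActorMemory): number {
  switch (node.type) {
    case "const":
      return node.value;
    case "get":
      return actor[node.variable] ?? 0; // unset variables default to 0
    case "add":
      return evaluate(node.a, actor) + evaluate(node.b, actor);
    case "greater":
      return evaluate(node.a, actor) > evaluate(node.b, actor) ? 1 : 0;
  }
}

// Follow execution wires one node at a time, starting from an event's first link.
function execute(start: ExecNode | undefined, actor: ActorMemory, log: string[]): void {
  let node: ExecNode | undefined = start;
  while (node) {
    switch (node.type) {
      case "set":
        actor[node.variable] = evaluate(node.value, actor);
        node = node.next;
        break;
      case "print":
        log.push(node.message);
        node = node.next;
        break;
      case "branch":
        node = evaluate(node.condition, actor) !== 0 ? node.ifTrue : node.ifFalse;
        break;
    }
  }
}

// Hypothetical "OnHit" graph: Health = Health + (-25); Branch(Health > 0) -> Print.
const onHit: ExecNode = {
  type: "set",
  variable: "Health",
  value: { type: "add", a: { type: "get", variable: "Health" }, b: { type: "const", value: -25 } },
  next: {
    type: "branch",
    condition: { type: "greater", a: { type: "get", variable: "Health" }, b: { type: "const", value: 0 } },
    ifTrue: { type: "print", message: "Ouch!" },
    ifFalse: { type: "print", message: "Destroyed" },
  },
};

const actor: ActorMemory = { Health: 40 };
const log: string[] = [];
execute(onHit, actor, log); // Health 40 -> 15, takes the true branch
execute(onHit, actor, log); // Health 15 -> -10, takes the false branch
console.log(actor.Health, log); // -10 [ 'Ouch!', 'Destroyed' ]
```

The same graph object can be run against many actors; only the `ActorMemory` passed in changes, which mirrors how the context separates shared logic from per-instance state.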

Where else is the Interpreter used?

The Interpreter pattern can be found in almost any complex system that needs to execute dynamic instructions. Here are just a few examples from commercial software:

- Interpreted programming languages (Python, Ruby, PHP). Their reference runtimes are built around this idea. For example, CPython, the reference implementation of Python, first parses your .py script into an AST, compiles it into bytecode, and then a large virtual machine (the evaluation loop) interprets that bytecode step by step.

- Java Virtual Machine (JVM). Java source code is compiled not into machine instructions but into bytecode. When you run the application, the JVM starts by interpreting this bytecode and then aggressively JIT-compiles hot code, much like V8.

- Databases and SQL. When you issue an SQL query (SELECT * FROM users) in PostgreSQL or MySQL, the database engine acts as an interpreter. It performs lexical analysis, builds a query parse tree, generates an execution plan, and then literally “interprets” this plan by iterating over the rows of the tables.

- Regular expressions (RegEx). A typical regular expression engine parses a pattern string (for example, ^\d{3}-\d{2}$) into a state machine (an NFA or DFA), which its internal interpreter then walks, matching each input character against the transitions of this graph.

- Unity Shader Graph / Unreal Material Editor. These tools walk a graph of visual nodes and translate it into shader code (HLSL/GLSL).

- Blender Geometry Nodes. A graph of mathematical and geometric operations is evaluated to procedurally generate 3D models in real time.
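The database case is often described with the iterator (“Volcano”) model, in which every plan node exposes a `next()` method that pulls rows from its children one at a time. Here is a minimal TypeScript sketch of that idea for a query like SELECT name FROM users WHERE age >= 18; the node functions (`seqScan`, `filter`, `project`, `execute`) and the in-memory `users` table are hypothetical, and real engines add cost-based planning, indexes, joins and much more.

```typescript
type Row = Record<string, string | number>;

// Every plan node is an iterator: each next() call yields one row, or undefined when done.
interface PlanNode {
  next(): Row | undefined;
}

// Leaf node: sequential scan over an in-memory table.
function seqScan(table: Row[]): PlanNode {
  let i = 0;
  return { next: () => table[i++] };
}

// Filter node (the WHERE clause): pull rows from the child until one matches.
function filter(child: PlanNode, predicate: (row: Row) => boolean): PlanNode {
  return {
    next() {
      for (let row = child.next(); row !== undefined; row = child.next()) {
        if (predicate(row)) return row;
      }
      return undefined;
    },
  };
}

// Projection node (the SELECT list): keep only the requested columns.
function project(child: PlanNode, columns: string[]): PlanNode {
  return {
    next() {
      const row = child.next();
      if (row === undefined) return undefined;
      const out: Row = {};
      for (const c of columns) out[c] = row[c];
      return out;
    },
  };
}

// The executor "interprets" the plan by pulling rows from the root until it is exhausted.
function execute(plan: PlanNode): Row[] {
  const result: Row[] = [];
  for (let row = plan.next(); row !== undefined; row = plan.next()) result.push(row);
  return result;
}

// Plan for: SELECT name FROM users WHERE age >= 18
const users: Row[] = [
  { name: "Ann", age: 34 },
  { name: "Bob", age: 12 },
  { name: "Eve", age: 19 },
];
const plan = project(filter(seqScan(users), (r) => Number(r.age) >= 18), ["name"]);
console.log(execute(plan)); // [ { name: 'Ann' }, { name: 'Eve' } ]
```

Each plan node is a non-terminal expression whose children are other plan nodes, and the scan is the terminal; rows flow upward on demand, so the whole table never has to be materialized between steps.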

Summary

The Interpreter pattern has long since outgrown “writing your own calculator”. It is a powerful industry standard. From JavaScript engines that execute enormous amounts of code behind the scenes in browsers every day to visual scripting tools that let game designers build complex logic without knowing C++, interpreters remain one of the most important architectural concepts in modern software development.