In this article I will describe the Iterator pattern.

It belongs to the family of behavioral design patterns.

Print it

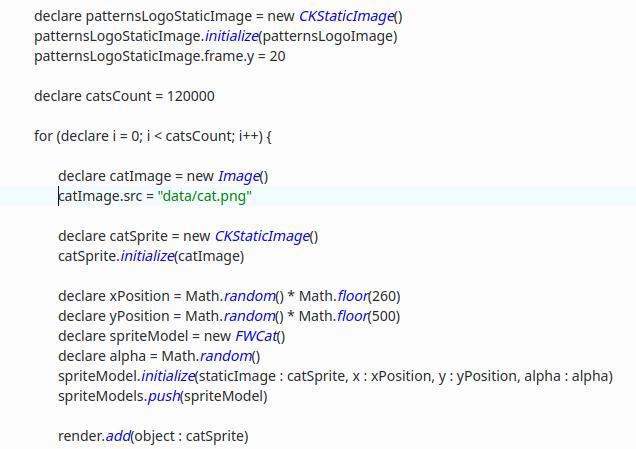

Suppose we need to print the track list of the album “Procrastinate them all” by the band “Procrastinallica”.

The naive implementation (Swift) looks like this:

    for i in 0..<tracks.count {
        print(tracks[i].title)
    }

Suddenly, at compile time, it turns out that the class of the tracks object does not expose the number of tracks through count, and, moreover, its elements cannot be accessed by index. Oh…

Filter it

Suppose we are writing an article for the magazine “Wacky Hammer” and need a list of tracks by the band “Djentuggah” whose tempo exceeds 140 beats per minute. A peculiarity of this band is that its recordings are stored in a huge collection of underground bands, not sorted by album or by any other criterion. Let’s imagine that we are working in a language without functional-style features such as filter:

    var djentuggahFastTracks = [Track]()
    // Keep only Djentuggah tracks faster than 140 bpm.
    for track in undergroundCollectionTracks {
        if track.band.title == "Djentuggah" && track.info.bpm > 140 {
            djentuggahFastTracks.append(track)
        }
    }

Suddenly, a couple of the band's tracks turn up in a collection of digitized tapes, and the magazine's editor suggests searching that collection too and writing about what we find. A data scientist friend suggests using a Djentuggah track classification algorithm, so that we do not have to listen to 200 thousand tapes by hand. Let's try:

    var djentuggahFastTracks = [Track]()
    // First pass: the underground collection carries band and bpm metadata.
    for track in undergroundCollectionTracks {
        if track.band.title == "Djentuggah" && track.info.bpm > 140 {
            djentuggahFastTracks.append(track)
        }
    }

    // Second pass: the tapes have no metadata, so classifiers supply it.
    let tracksClassifier = TracksClassifier()
    let bpmClassifier = BPMClassifier()
    for track in cassetsTracks {
        if tracksClassifier.classify(track).band.title == "Djentuggah" && bpmClassifier.classify(track).bpm > 140 {
            djentuggahFastTracks.append(track)
        }
    }

Mistakes

Now, just before the issue goes to print, the editor reports that 140 beats per minute is out of fashion and readers are more interested in 160, so the article has to be rewritten with the right tracks.

Apply changes:

    var djentuggahFastTracks = [Track]()
    for track in undergroundCollectionTracks {
        if track.band.title == "Djentuggah" && track.info.bpm > 160 {
            djentuggahFastTracks.append(track)
        }
    }

    let tracksClassifier = TracksClassifier()
    let bpmClassifier = BPMClassifier()
    for track in cassetsTracks {
        if tracksClassifier.classify(track).band.title == "Djentuggah" && bpmClassifier.classify(track).bpm > 140 {
            djentuggahFastTracks.append(track)
        }
    }

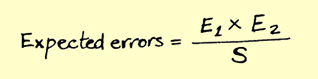

The most attentive readers noticed the error: the bpm value was changed only in the first pass. The more passes over collections there are, the higher the chance of such a mistake, which is why the DRY principle should be applied. The example could be developed further, for instance by requiring several bands with different bpm values, or by filtering on the names of vocalists and guitarists; each addition increases the chance of an error caused by duplicated code.
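As a first step toward DRY, even before any iterator appears, the condition can be kept in exactly one place. The sketch below is only an illustration: TrackInfo, targetBand, minimumBpm, isWanted and the sample data are made-up names and values, and the classifier output is assumed to have already been turned into the same TrackInfo shape.

```swift
// Illustrative stand-in for "band title + bpm", whether it comes from
// metadata or from a classifier. Not a type from the article's code.
struct TrackInfo {
    let bandTitle: String
    let bpm: Int
}

// The band name and the threshold are defined exactly once.
let targetBand = "Djentuggah"
let minimumBpm = 160

// Single shared predicate used by every pass.
func isWanted(_ info: TrackInfo) -> Bool {
    return info.bandTitle == targetBand && info.bpm > minimumBpm
}

// Made-up sample data for the two sources.
let undergroundInfos = [
    TrackInfo(bandTitle: "Djentuggah", bpm: 170),
    TrackInfo(bandTitle: "Djentuggah", bpm: 150),
    TrackInfo(bandTitle: "Other Band", bpm: 180)
]
let cassetteInfos = [
    TrackInfo(bandTitle: "Djentuggah", bpm: 165),
    TrackInfo(bandTitle: "Djentuggah", bpm: 140)
]

var wanted: [TrackInfo] = []
for info in undergroundInfos where isWanted(info) {
    wanted.append(info)
}
for info in cassetteInfos where isWanted(info) {
    wanted.append(info)
}

for info in wanted {
    print("\(info.bandTitle) at \(info.bpm) bpm")
}
```

Changing minimumBpm now affects both passes at once, but the two loops themselves are still duplicated.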

Behold the Iterator!

In the literature, an iterator is described as a combination of two protocols (interfaces). The first is the iterator interface itself, which consists of two methods: next() returns the next object from the collection, and hasNext() reports whether there is another object, that is, whether the list has not ended yet. In practice, however, I have also seen iterators with a single method, next(), which returns null once the list is exhausted. The second is the collection interface, which must provide an iterator through an iterator() method. There are variations in which the collection returns one iterator at the initial position and one at the end: the begin() and end() methods in the C++ standard library work this way.
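The two-interface description can be sketched in Swift roughly as follows. All names here (TitleIterator, TitleCollection, ArrayTitleIterator, Album) and the sample titles are invented for illustration and are not taken from the article's repository. Swift's own standard library follows the single-method variant: IteratorProtocol has one next() method that returns nil at the end, and Sequence hands out iterators through makeIterator().

```swift
// Iterator interface in the classic two-method form.
protocol TitleIterator {
    // True while there is at least one more title to return.
    func hasNext() -> Bool
    // Returns the current title and advances; call only when hasNext() is true.
    func next() -> String
}

// Collection interface: the only thing callers can ask for is an iterator.
protocol TitleCollection {
    // Hands out a fresh iterator positioned at the first element.
    func iterator() -> TitleIterator
}

final class ArrayTitleIterator: TitleIterator {
    private let titles: [String]
    private var position = 0

    init(titles: [String]) {
        self.titles = titles
    }

    func hasNext() -> Bool {
        return position < titles.count
    }

    func next() -> String {
        let title = titles[position]
        position += 1
        return title
    }
}

final class Album: TitleCollection {
    // Storage stays private: callers never see count or subscripts.
    private let titles: [String]

    init(titles: [String]) {
        self.titles = titles
    }

    func iterator() -> TitleIterator {
        return ArrayTitleIterator(titles: titles)
    }
}

let album = Album(titles: ["Intro", "Later", "Tomorrow"])
let it = album.iterator()
var printed: [String] = []
while it.hasNext() {
    let title = it.next()
    printed.append(title)
    print(title)
}
```

The calling loop never touches count or an index, so the album is free to store its titles in any structure.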

Using an iterator in the example above removes the duplicated code and, with it, the chance of a mistake caused by duplicated filter conditions. It also becomes easier to work with the track collection through a single interface: if the internal structure of the collection changes, the interface stays the same and the external code is not affected.

Wow!

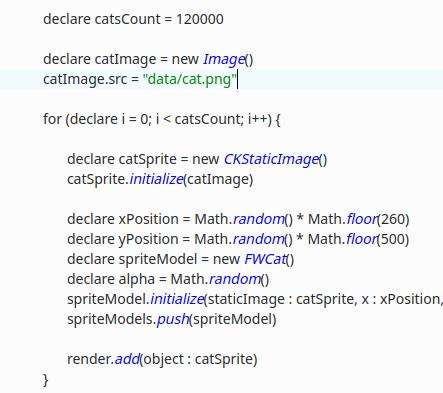

    let bandFilter = Filter(key: "band", value: "Djentuggah")
    let bpmFilter = Filter(key: "bpm", value: 140)
    let iterator = tracksCollection.filterableIterator(filters: [bandFilter, bpmFilter])

    while let track = iterator.next() {
        print("\(track.band) - \(track.title)")
    }
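The types behind this call are not shown here, so the following is only one possible sketch of how filterableIterator could be backed. It assumes a flat Track with band, title and bpm fields, a Filter that matches by equality on a named field, and an array as internal storage; the track titles are made up.

```swift
// Assumed flat track shape, just enough for the example.
struct Track {
    let band: String
    let title: String
    let bpm: Int
}

// Hypothetical filter: matches when the field named by `key` equals `value`.
struct Filter {
    let key: String
    let value: Any

    func matches(_ track: Track) -> Bool {
        switch key {
        case "band": return (value as? String) == track.band
        case "bpm": return (value as? Int) == track.bpm
        default: return false // unknown keys match nothing
        }
    }
}

final class FilterableIterator {
    private let tracks: [Track]
    private let filters: [Filter]
    private var position = 0

    init(tracks: [Track], filters: [Filter]) {
        self.tracks = tracks
        self.filters = filters
    }

    // Returns the next track that passes every filter, or nil when exhausted.
    func next() -> Track? {
        while position < tracks.count {
            let track = tracks[position]
            position += 1
            if filters.allSatisfy({ $0.matches(track) }) {
                return track
            }
        }
        return nil
    }
}

final class TracksCollection {
    // Internal storage is hidden; it could be swapped without touching callers.
    private let tracks: [Track]

    init(tracks: [Track]) {
        self.tracks = tracks
    }

    func filterableIterator(filters: [Filter]) -> FilterableIterator {
        return FilterableIterator(tracks: tracks, filters: filters)
    }
}

let tracksCollection = TracksCollection(tracks: [
    Track(band: "Djentuggah", title: "Fast One", bpm: 140),
    Track(band: "Djentuggah", title: "Slow One", bpm: 90),
    Track(band: "Procrastinallica", title: "Later", bpm: 140)
])
let iterator = tracksCollection.filterableIterator(filters: [
    Filter(key: "band", value: "Djentuggah"),
    Filter(key: "bpm", value: 140)
])

var matchedTitles: [String] = []
while let track = iterator.next() {
    matchedTitles.append(track.title)
    print("\(track.band) - \(track.title)")
}
```

The filtering condition now lives in one place, inside next(), so a change to the filters applies to every collection that is walked through this interface.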

Changes

While an iterator is running, the collection may change, which invalidates the iterator’s internal counter and, more generally, breaks the very notion of a “next object”. Many frameworks check whether the collection’s state has changed and report an error or throw an exception if it has. Some implementations let you remove objects from the collection during iteration by providing a remove() method on the iterator.
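One common way to implement such a check is a modification counter: the collection bumps a version number on every change, and the iterator compares it with the value it saw at creation. The sketch below assumes this approach; Playlist, FailFastIterator and IterationError are hypothetical names, not the API of any particular framework.

```swift
enum IterationError: Error {
    case collectionChanged
}

final class Playlist {
    private(set) var titles: [String]
    // Bumped on every structural change so iterators can detect staleness.
    private(set) var version = 0

    init(titles: [String]) {
        self.titles = titles
    }

    func append(_ title: String) {
        titles.append(title)
        version += 1
    }

    func failFastIterator() -> FailFastIterator {
        return FailFastIterator(playlist: self)
    }
}

final class FailFastIterator {
    private let playlist: Playlist
    // Version of the playlist at the moment the iterator was created.
    private let expectedVersion: Int
    private var position = 0

    init(playlist: Playlist) {
        self.playlist = playlist
        self.expectedVersion = playlist.version
    }

    // Throws if the playlist changed after this iterator was created.
    func next() throws -> String? {
        guard playlist.version == expectedVersion else {
            throw IterationError.collectionChanged
        }
        guard position < playlist.titles.count else {
            return nil
        }
        let title = playlist.titles[position]
        position += 1
        return title
    }
}

let playlist = Playlist(titles: ["Intro", "Later"])
let failFast = playlist.failFastIterator()

var firstTitle: String? = nil
var detectedChange = false
do {
    firstTitle = try failFast.next()
    playlist.append("Tomorrow") // changes the collection mid-iteration
    _ = try failFast.next()     // the stale iterator refuses to continue
} catch IterationError.collectionChanged {
    detectedChange = true
} catch {
    // No other error is thrown in this sketch.
}
print(detectedChange ? "collection changed during iteration" : "iteration finished")
```

An iterator that offers its own remove() could update expectedVersion after removing, which is how removal during iteration can stay legal while outside changes are still detected.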

Documentation

https://refactoring.guru/en/design-patterns/iterator

Source code

https://gitlab.com/demensdeum/patterns/